Main Types of Gradient Descent | Batch, Stochastic and Mini-Batch Explained! | Which One to Choose?

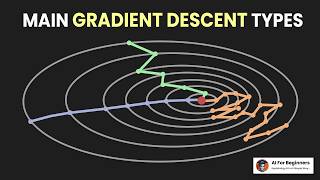

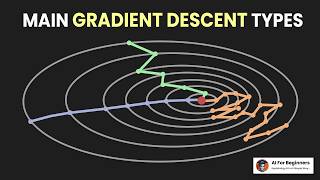

🔥 There are three main types of gradient descent: batch, stochastic, and mini-batch. Batch gradient descent uses every observation to compute the gradient, which makes each step accurate but resource-heavy. Stochastic gradient descent uses a single randomly chosen observation per step, which gives a noisy approximation of the gradient but introduces useful randomness. Mini-batch gradient descent is a mix of the two: each step uses a small random sample of the data.
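As a rough illustration, the three variants can be written as one training loop that differs only in batch size. This is a minimal sketch, not code from the video: the linear model, the mean squared error loss, the helper names `minibatch_indices` and `fit_linear`, the toy data, and the learning rate are all assumptions chosen for the example. It also reshuffles the data once per epoch, which is a common way to draw random observations.

```python
import numpy as np

def minibatch_indices(n_samples, batch_size, rng):
    """Yield index arrays that cover a freshly shuffled dataset once (one epoch)."""
    order = rng.permutation(n_samples)
    for start in range(0, n_samples, batch_size):
        yield order[start:start + batch_size]

def fit_linear(X, y, batch_size, lr=0.05, epochs=200, seed=0):
    """Fit y ~ X @ w + b by gradient descent on mean squared error.

    batch_size == len(X)  -> batch gradient descent
    batch_size == 1       -> stochastic gradient descent
    anything in between   -> mini-batch gradient descent
    """
    rng = np.random.default_rng(seed)
    n_samples, n_features = X.shape
    w = np.zeros(n_features)
    b = 0.0
    for _ in range(epochs):
        for idx in minibatch_indices(n_samples, batch_size, rng):
            Xb, yb = X[idx], y[idx]
            error = Xb @ w + b - yb                  # residuals on this batch only
            grad_w = 2.0 * Xb.T @ error / len(idx)   # d(MSE)/dw
            grad_b = 2.0 * error.mean()              # d(MSE)/db
            w -= lr * grad_w
            b -= lr * grad_b
    return w, b

# Hypothetical toy data: y = 3x + 1 plus a little noise
data_rng = np.random.default_rng(42)
X = data_rng.uniform(-1.0, 1.0, size=(200, 1))
y = 3.0 * X[:, 0] + 1.0 + data_rng.normal(0.0, 0.1, size=200)

w_batch, b_batch = fit_linear(X, y, batch_size=len(X))        # 1 update per epoch
w_sgd, b_sgd = fit_linear(X, y, batch_size=1, epochs=20)      # 200 updates per epoch
w_mini, b_mini = fit_linear(X, y, batch_size=32, epochs=50)   # 7 updates per epoch

print("batch:     ", w_batch, b_batch)
print("stochastic:", w_sgd, b_sgd)
print("mini-batch:", w_mini, b_mini)
```

On this toy problem all three runs should land close to the true slope and intercept; what changes is how many observations each update looks at and how many updates happen per pass over the data.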

Each type has its own advantages and disadvantages. Batch gradient descent requires more resources per update and converges steadily to a minimum (sometimes a local one), while stochastic gradient descent often makes faster progress thanks to its frequent updates but does not settle on the exact minimum and instead hovers around it. Additionally, its randomness can help explore the parameter space more widely. Mini-batch gradient descent is a compromise between the two and the most popular choice! Remember, each problem calls for its own approach, so experiment to see which one works best for you!
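The trade-off can be made concrete by comparing, for a few batch sizes, how many updates fit into one pass over the data and how far a sampled gradient typically lands from the full-data gradient. The sketch below is only an illustration under assumed conditions: the synthetic regression data, the mean squared error loss, and the helper names `mse_gradient` and `gradient_noise` are hypothetical and not taken from the video.

```python
import numpy as np

def mse_gradient(w, X, y):
    """Gradient of mean squared error for the model y ~ X @ w."""
    return 2.0 * X.T @ (X @ w - y) / len(y)

def gradient_noise(w, X, y, batch_size, trials=500, seed=0):
    """Average distance between a random-batch gradient and the full-data gradient."""
    rng = np.random.default_rng(seed)
    full = mse_gradient(w, X, y)
    distances = []
    for _ in range(trials):
        idx = rng.choice(len(y), size=batch_size, replace=False)
        batch_grad = mse_gradient(w, X[idx], y[idx])
        distances.append(np.linalg.norm(batch_grad - full))
    return float(np.mean(distances))

# Hypothetical synthetic data: 256 observations, 3 features
data_rng = np.random.default_rng(1)
X = data_rng.normal(size=(256, 3))
y = X @ np.array([1.0, -2.0, 0.5]) + data_rng.normal(0.0, 0.5, size=256)
w = np.zeros(3)  # measure the noise at the starting point

for batch_size in (1, 32, 256):
    updates_per_epoch = -(-len(y) // batch_size)  # ceiling division
    noise = gradient_noise(w, X, y, batch_size)
    print(f"batch_size={batch_size:>3}  updates/epoch={updates_per_epoch:>3}  "
          f"gradient noise={noise:.3f}")
```

A batch size of 1 gives the most updates per epoch and the noisiest gradients, the full batch gives one update with essentially no sampling noise, and the mini-batch sits in between, which is one way to read why it is the usual default.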

🔍 Key points covered:

0:00 - Introduction.

0:10 - Batch gradient descent.

0:20 - Stochastic gradient descent.

0:34 - Mini-batch gradient descent.

0:48 - Batch gradient descent pros and cons.

1:21 - Stochastic gradient descent pros and cons.

1:51 - Mini-batch gradient descent pros and cons.

2:10 - Subscribe to us!

🔔 Don't forget to like, subscribe, and hit the bell icon to stay updated with our latest videos!

🤖 Note that we use synthetic generations, such as AI-generated images and voices, to enhance the appeal and engagement of our content.

🌐 If you have any questions or topics you want us to cover, leave a comment below. Also, share your thoughts about the content: how do you think we can make it better? Thanks for watching!

Artificial Intelligence Explained In Simple Words | What Is AI? | Explained On A Real World Example!

AI For Beginners

AI vs. ML vs. DL vs. DS - Difference Explained | On Real World Examples | AI For Beginners

AI For Beginners

Types Of Machine Learning Algorithms | Explained On Real World Examples | ML For Beginners

AI For Beginners

Best AI Music Generator | Music Generation Tool for FREE | MusicGen developed by Meta AI

AI For Beginners

The Ultimate Guide To Supervised Learning | Explained On Binary Classification Example | Part 1

AI For Beginners

The Ultimate Guide To Supervised Learning | Classification And Regression | Part 2

AI For Beginners

Linear Regression Explained | A Beginner's Guide To Regression | The Basics You Need to Know!

AI For Beginners

Assumptions Of Linear Regression | What To Do If The Assumptions Do Not Hold? | Part 1

AI For Beginners

Checking The Assumptions Of Linear Regression | Statistical And Visual Methods | Part 2

AI For Beginners

The Purpose of Train-Test Split in Machine Learning | How to Correctly Split Data?

AI For Beginners

The Role of Validation Sets in Model Training | Train-Test-Validation Splits | Clearly explained!

AI For Beginners

Overfitting and Underfitting | Bias and Variance Tradeoff in Machine Learning | Clearly Explained!

AI For Beginners

Gradient Descent Explained | How Do ML and DL Models Learn? | Simple Explanation!

AI For Beginners

Main Types of Gradient Descent | Batch, Stochastic and Mini-Batch Explained! | Which One to Choose?

AI For Beginners

The Role of Loss Functions | Most Common Loss Functions in Machine Learning | Explained!

AI For Beginners

How to Evaluate Your ML Models Effectively? | Evaluation Metrics in Machine Learning!

AI For Beginners

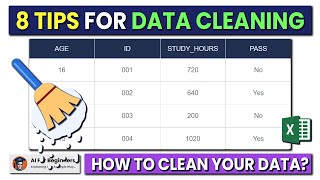

8 Best Tips For Cleaning Your Data | Data Cleaning | Machine Learning, Data Preparation.

AI For Beginners

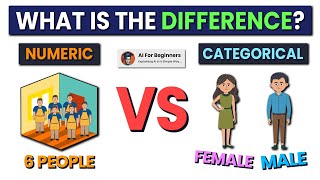

Numerical vs. Categorical Data | Represent Your Dataset Correctly!

AI For Beginners

3 Main Types of Missing Data | Do THIS Before Handling Missing Values!

AI For Beginners

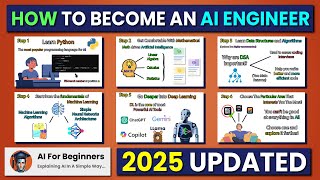

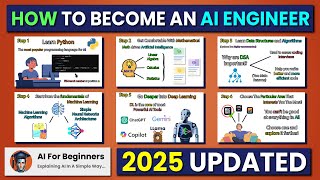

7 PROVEN Strategies To Become An AI Engineer (2025 Updated)

AI For Beginners

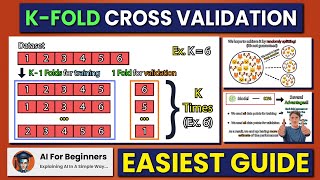

Easiest Guide to K-Fold Cross Validation | Explained in 2 Minutes!

AI For Beginners

Normalization and Standardization | Why to Scale the Features? | ML Basics

AI For Beginners

The Ultimate Guide to Hyperparameter Tuning | Grid Search vs. Randomized Search

AI For Beginners

How is Artificial Intelligence different from Traditional Programming?

AI For Beginners

All Machine Learning Models Clearly Explained!

AI For Beginners

6 Mistakes to Avoid When Learning Machine Learning in 2025

AI For Beginners

Best Practices for Effective Data Visualization In Machine Learning!

AI For Beginners

Central Limit Theorem Intuition Explained Like You're 5!

AI For Beginners

Which Door Would You Choose? | Monty Hall Problem Explained!

AI For Beginners

All Machine Learning Concepts Explained in 18 Minutes!

AI For Beginners

What’s the Probability That Two Randomly Drawn Chords in a Circle Intersect?

AI For Beginners

Causation vs Correlation | The Most Confused Concept in Data Science

AI For Beginners
