Why OpenAI Embeddings Still Work Even After You Truncate Them

Why do OpenAI embeddings still work even after you truncate away a large share of their dimensions?

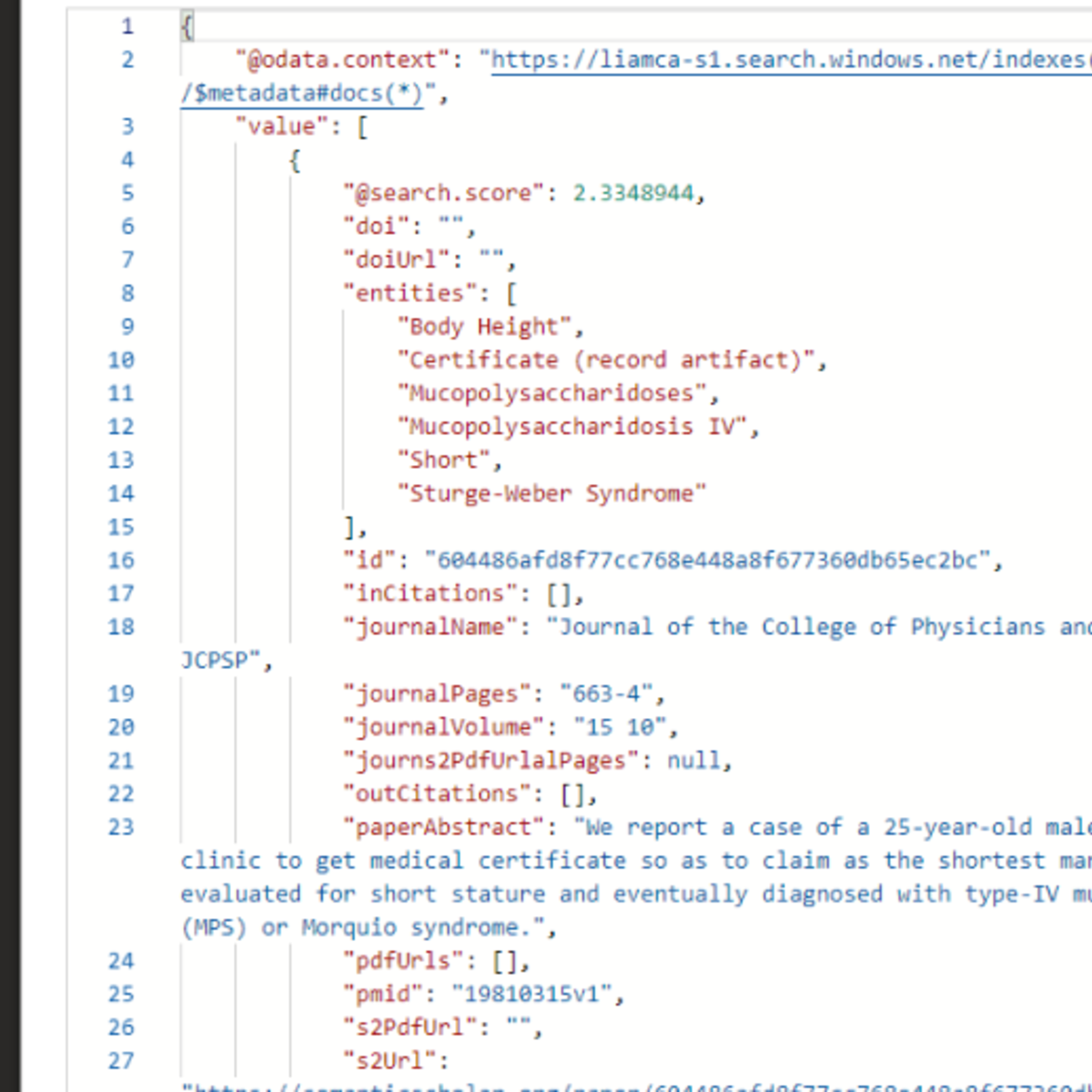

In this video, we explore Matryoshka Representation Learning (MRL), a training technique that allows embeddings to remain useful even when you use only a prefix of the full vector. This makes it possible to trade off accuracy, memory usage, and retrieval latency at inference time.
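As a rough illustration of what "using only a prefix" means in practice, the sketch below keeps the first components of a vector and rescales the result to unit length so cosine similarity still behaves as expected. The helper name `truncate_embedding`, the 1536 and 256 sizes, and the random vector standing in for a real model output are all assumptions made for this example.

```python
import numpy as np

def truncate_embedding(vec, dim):
    """Keep the first `dim` components and rescale to unit length.

    Hypothetical helper for illustration; not part of any library.
    """
    prefix = np.asarray(vec, dtype=np.float64)[:dim]
    norm = np.linalg.norm(prefix)
    if norm == 0.0:
        return prefix  # nothing to rescale
    return prefix / norm

# A random unit vector stands in for a real embedding (assumption).
rng = np.random.default_rng(0)
full = rng.normal(size=1536)
full /= np.linalg.norm(full)

short = truncate_embedding(full, 256)
print(short.shape)
```

Re-normalizing matters because a prefix of a unit vector is generally shorter than unit length, which would skew dot-product scores if left as is.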

We first look at how embeddings are normally trained using contrastive learning, and why standard embedding models do not guarantee that truncated vectors will work well. Then we see how Matryoshka Representation Learning modifies the training loss to make smaller prefixes of the embedding independently useful.
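One way to picture the loss change is to apply the same contrastive loss to several nested prefixes and add the results. The sketch below is a minimal NumPy version under stated assumptions: an in-batch InfoNCE loss, uniform weights, and illustrative prefix sizes. The function names `info_nce` and `matryoshka_loss` are hypothetical, and this is not the exact recipe from the paper or from any particular embedding model.

```python
import numpy as np

def info_nce(queries, docs, temperature=0.05):
    """In-batch contrastive loss: row i of `queries` should match row i of `docs`."""
    q = queries / np.linalg.norm(queries, axis=1, keepdims=True)
    d = docs / np.linalg.norm(docs, axis=1, keepdims=True)
    logits = q @ d.T / temperature
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    log_probs = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return -np.mean(np.diag(log_probs))

def matryoshka_loss(queries, docs, dims=(8, 16, 32, 64), weights=None):
    """Weighted sum of the same loss computed on each nested prefix."""
    if weights is None:
        weights = [1.0] * len(dims)  # uniform weighting (assumption)
    return sum(
        w * info_nce(queries[:, :m], docs[:, :m])
        for w, m in zip(weights, dims)
    )

# Toy batch: each "document" is a noisy copy of its query (assumption).
rng = np.random.default_rng(0)
queries = rng.normal(size=(4, 64))
docs = queries + 0.1 * rng.normal(size=(4, 64))

total = matryoshka_loss(queries, docs)
print(float(total))
```

Because every prefix contributes its own loss term, the optimizer is pushed to make the first 8, 16, and 32 dimensions useful on their own instead of relying only on the full vector.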

Finally, we look at results from the original MRL paper and experiments with modern embedding models to understand how truncation affects retrieval performance.

This idea is especially useful for systems that rely on vector search, semantic retrieval, and RAG (Retrieval Augmented Generation).
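To make the memory side of that trade-off concrete, here is a back-of-the-envelope calculation for a flat float32 index. The corpus size of one million vectors and the 1536 and 256 dimensions are illustrative assumptions, and `index_bytes` is a hypothetical helper; real vector databases add their own overhead on top.

```python
def index_bytes(num_vectors, dim, bytes_per_value=4):
    """Raw storage for a flat index of float32 vectors (no index overhead)."""
    return num_vectors * dim * bytes_per_value

full_size = index_bytes(1_000_000, 1536)
short_size = index_bytes(1_000_000, 256)

print(f"full: {full_size / 1e9:.3f} GB, truncated: {short_size / 1e9:.3f} GB")
```

The same ratio applies to the arithmetic in a brute-force similarity scan, since each comparison touches proportionally fewer values.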

⏱️ Timestamps:

00:00 Truncated OpenAI Embeddings Still Work

00:46 The Idea Behind Matryoshka Embeddings

02:03 How Embeddings Are Normally Trained (Contrastive Learning)

03:28 How Matryoshka Representation Learning Changes the Loss

05:16 Why Matryoshka Embeddings Are Useful for Vector Search & RAG

06:21 Results from the MRL Paper

📖 Resources:

Matryoshka Representation Learning Paper - https://arxiv.org/pdf/2205.13147

Weaviate Blog Post - https://weaviate.io/blog/openais-matryoshka-embeddings-in-weaviate

Weaviate Podcast on Matryoshka Representation Learning (with one of the authors) - https://www.youtube.com/watch?v=-0m2dZJ6zos

🔔 Subscribe:

https://tinyurl.com/exai-channel-link

📌 Keywords:

#openai #embeddings #vectorsearch #retrievalaugmentedgeneration


