A Better, Cheaper RAG (Neuro-Sym Multi-hop Reasoning)


TGS-RAG (Text-Graph Synergy)

TGS-RAG establishes a non-linear, bidirectional coupling between the continuous representation (text embedding vectors) and the discrete one (graph topology).

Prevailing approaches assumed that the only way to solve multi-hop reasoning over complex, unstructured documents was brute-force global indexing: generating costly hierarchical community summaries across the entire graph.

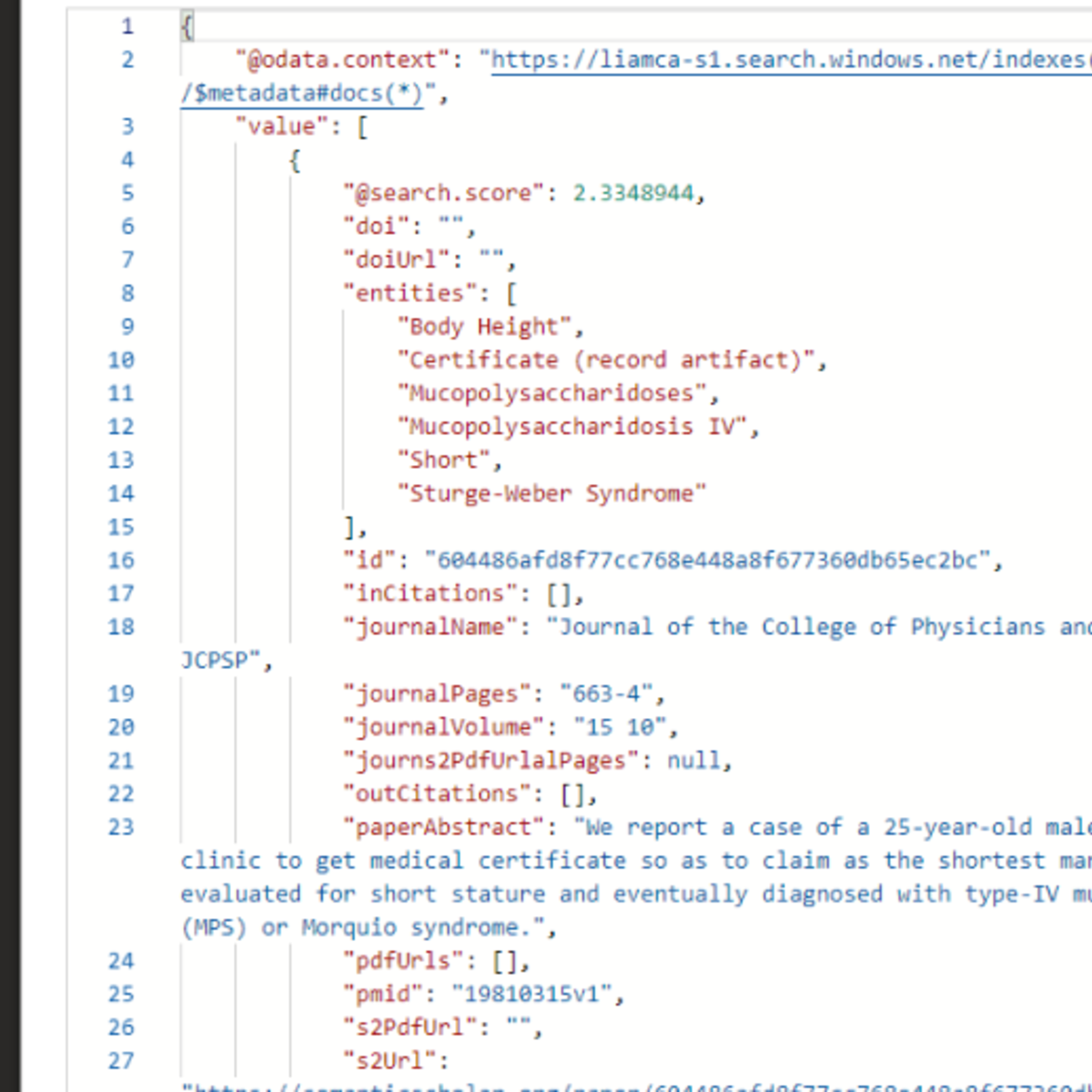

The primary delta of TGS-RAG is showing that global graph computation is a wildly inefficient hammer. Dense semantic search and structured graph traversal fail in orthogonal ways. The former fails through false positives, meaning passages that land close to the query in embedding space but are irrelevant. The latter fails through false negatives, meaning relevant paths that are pruned away during search (details are explained in the video). Having identified this, the authors demonstrate that the two methods can dynamically correct each other at inference time.
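
As a rough illustration of this mutual correction, the Python sketch below fuses the two retrieval channels. It is not the authors' method. The function `cross_check`, its inputs and the `strong_score` threshold of 0.85 are all assumptions made for the example. The graph verifies text by accepting a vector hit only when the hit's entities lie inside the explored subgraph. Text completes the graph by admitting a very high-scoring hit that the traversal never reached, and by flagging that hit's entities for re-expansion.

```python
from typing import Dict, Set, Tuple


def cross_check(
    text_hits: Dict[str, float],
    graph_hits: Set[str],
    passage_entities: Dict[str, Set[str]],
    visited_entities: Set[str],
    strong_score: float = 0.85,
) -> Tuple[Set[str], Set[str]]:
    """Hypothetical fusion of dense-retrieval hits with graph-traversal hits.

    text_hits:        passage id -> similarity score from vector search
    graph_hits:       passage ids reached by the (pruned) graph traversal
    passage_entities: passage id -> entities mentioned in that passage
    visited_entities: entities the traversal actually visited
    Returns (accepted passage ids, entities the graph should re-expand).
    """
    accepted: Set[str] = set(graph_hits)  # graph results form the backbone
    reexpand: Set[str] = set()

    for pid, score in text_hits.items():
        ents = passage_entities.get(pid, set())
        if ents & visited_entities:
            # Graph verifies text: the hit is anchored in the explored subgraph.
            accepted.add(pid)
        elif score >= strong_score:
            # Text completes graph: a strong hit the traversal never reached
            # hints that a branch was pruned too early, so flag its entities.
            accepted.add(pid)
            reexpand |= ents
        # Otherwise the hit is weak and ungrounded: treat it as a false positive.

    return accepted, reexpand


if __name__ == "__main__":
    accepted, reexpand = cross_check(
        text_hits={"p1": 0.91, "p2": 0.62, "p3": 0.88},
        graph_hits={"p4"},
        passage_entities={"p1": {"A"}, "p2": {"B"}, "p3": {"C"}, "p4": {"A"}},
        visited_entities={"A"},
    )
    print(sorted(accepted), sorted(reexpand))  # ['p1', 'p3', 'p4'] ['C']
```

In the demo, the weak, ungrounded hit `p2` is discarded as a likely false positive. The strong but unreached hit `p3` is kept, and its entity `C` is returned so the graph side can re-expand it.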

Treating beam-search pruning not as a permanent truncation but as a cached state provides a scalable, computationally lightweight foundation for integrating symbolic reasoning with neural representations. In this cached state, pruned branches are kept aside and restored only once retrieved text points to them: a topological "superposition" that "collapses" when textually observed (!).
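
The cached-pruning idea can be sketched as a beam search that parks pruned nodes in a cache instead of discarding them. A cached node is revived as soon as text retrieval independently surfaces it. This is a hypothetical illustration, not the paper's implementation. The names `beam_search_with_cache`, `scores` and `observed` are assumptions, and `observed` is simplified to a fixed set of node IDs rather than the output of a live retriever.

```python
from typing import Dict, List, Set


def beam_search_with_cache(
    graph: Dict[str, List[str]],
    scores: Dict[str, float],
    seeds: List[str],
    beam_width: int,
    hops: int,
    observed: Set[str],
) -> Set[str]:
    """Hypothetical beam search that parks pruned nodes instead of dropping them.

    graph:    adjacency list of the knowledge graph
    scores:   per-node relevance to the query (higher is better)
    observed: nodes that dense text retrieval surfaced independently
    Returns every node visited, including nodes revived from the cache.
    """
    visited: Set[str] = set(seeds)
    frontier: List[str] = list(seeds)
    pruned_cache: Set[str] = set()

    for _ in range(hops):
        candidates = {n for node in frontier for n in graph.get(node, []) if n not in visited}
        # Rank by score (ties broken by name for determinism) and keep the beam.
        ranked = sorted(candidates, key=lambda n: (-scores.get(n, 0.0), n))
        kept, dropped = ranked[:beam_width], ranked[beam_width:]

        # Pruned nodes go to the cache rather than being discarded.
        pruned_cache.update(dropped)
        pruned_cache.difference_update(kept)  # a node re-found and kept leaves the cache

        # "Collapse": cached nodes confirmed by text retrieval rejoin the frontier.
        revived = sorted(pruned_cache & observed)
        pruned_cache.difference_update(revived)

        frontier = kept + revived
        visited.update(frontier)
        if not frontier:
            break

    return visited


if __name__ == "__main__":
    graph = {"q": ["a", "b", "c"], "a": ["d"], "c": ["e"]}
    scores = {"a": 0.9, "b": 0.8, "c": 0.1, "d": 0.5, "e": 0.7}
    print(sorted(beam_search_with_cache(graph, scores, ["q"], 2, 2, set())))
    # ['a', 'b', 'd', 'q']
    print(sorted(beam_search_with_cache(graph, scores, ["q"], 2, 2, {"c"})))
    # ['a', 'b', 'c', 'd', 'e', 'q']
```

Passing `observed={"c"}` revives the low-scoring node `c` after it is pruned at the first hop, which in turn lets the search reach its neighbour `e`. Pruning stays cheap, because only the beam plus any revived nodes are expanded, but no pruned branch is lost for good.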

All rights w/ authors:

Text-Graph Synergy: A Bidirectional Verification and Completion Framework for RAG

by

Jiarui Zhong, Hong Cai Chen

from

School of Automation, Southeast University, Nanjing 210096, China


