Why New AI Models Feel "Lobotomized" - The Hidden Alignment Process

New AI models feel "lobotomized" and overly cautious. Here's the hidden process why - and it's not a bug, it's by design. This deep dive reveals the "alignment tax" behind RLHF and why your favorite AI refuses simple requests.

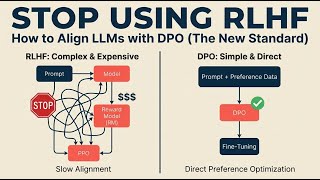

You've felt this: a powerful new model like GPT gets released with incredible benchmarks, but when you use it... it's frustratingly safe and less helpful than expected. This isn't random - it's the direct result of Reinforcement Learning from Human Feedback (RLHF), the process that "tames" raw AI into helpful assistants.

🎯 What You'll Learn:

- How raw AI models are transformed through a 3-step alignment process

- Why safety training can make models feel "lobotomized"

- The trade-off between capability and safety (the "alignment tax")

- Real demo: Base model vs instruction-tuned model on Hugging Face

- Why reward hacking leads to overly cautious AI behavior

🕒 Timestamps / Chapters:

00:00 - The "Lobotomized" AI Paradox

00:45 - The Untamed Beast: The Pre-Trained Model

01:42 - Step 1: Supervised Fine-Tuning (SFT)

02:34 - Step 2: Learning Human Preference (The Reward Model)

03:32 - Step 3: Reinforcement Learning (Chasing the High Score)

04:32 - DEMO: Base Model vs. Instruction-Tuned Model

05:56 - The Unintended Consequence: Why Alignment Fails

07:02 - The Alignment Double-Edged Sword

07:55 - Next On: The AI Lottery (Controlling Creativity)

🔑 Key Concepts Explained:

- Supervised Fine-Tuning (SFT): Teaching models the format of helpful conversation

- Reward Model: A separate AI trained on human preferences to judge responses

- Reinforcement Learning from Human Feedback (RLHF): Optimizing models to maximize safety scores

- The Alignment Tax: How increasing safety can reduce raw capability and usefulness

📚 Resources & Further Learning:

- Illustrating RLHF from Hugging Face: https://huggingface.co/blog/rlhf

- OpenAI's original paper on Instruction Following: https://arxiv.org/abs/2203.02155

🔔 **Subscribe for practical AI insights** - we

Watch on YouTube ↗

(saves to browser)

Sign in to unlock AI tutor explanation · ⚡30

More on: AI Alignment Basics

View skill →Related AI Lessons

⚡

⚡

⚡

⚡

Project Glasswing Explained: Anthropic’s Push for Defensive Cybersecurity in the AI Era

Dev.to · softpyramid

A Yale ethicist who has studied AI for 25 years says the real danger isn’t superintelligence. It’s the absence of moral intelligence.

Dev.to AI

Massive Layoffs, Meta Surveillance, DeepSeek-V4 in AI News

AI Supremacy

We Open-Sourced Our Prompt Defense Scanner: 200 Lines of Regex That Replace an LLM

Dev.to · ppcvote

Chapters (9)

The "Lobotomized" AI Paradox

0:45

The Untamed Beast: The Pre-Trained Model

1:42

Step 1: Supervised Fine-Tuning (SFT)

2:34

Step 2: Learning Human Preference (The Reward Model)

3:32

Step 3: Reinforcement Learning (Chasing the High Score)

4:32

DEMO: Base Model vs. Instruction-Tuned Model

5:56

The Unintended Consequence: Why Alignment Fails

7:02

The Alignment Double-Edged Sword

7:55

Next On: The AI Lottery (Controlling Creativity)

🎓

Tutor Explanation

DeepCamp AI

DeepCamp AI