Unlock Multimodal Search

"Unlock Multimodal Search" is an intermediate, hands-on course for developers and ML engineers ready to build the next generation of AI-powered search. Text-only search is no longer enough; this 90-minute course will teach you how to create applications that can search across different data types, such as finding text from an image. Using the powerful open-source vector database Weaviate, you will move from theory to a functioning demonstration. This course requires basic skills in Docker, APIs, Python, and the command line (CLI). Familiarity with vector databases. Docker Desktop must be installed.

This course is focused on execution. You will learn to configure a Weaviate schema to handle both image and text embeddings for a single object, ingest multimodal data, and perform powerful cross-modal queries. Through a final, hands-on project that mirrors a real-world job task, you will not only build an image-to-text search demo but also learn how to measure its accuracy with precision metrics. By the end, you'll be equipped to architect and validate sophisticated, multimodal AI applications.
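To make "precision metrics" concrete, here is a minimal sketch of precision@k, one common way to score a ranked result list. The course description does not say which precision variant it uses, so treat this choice as an assumption; the function name `precision_at_k` and the caption IDs are hypothetical and exist only for illustration.

```python
def precision_at_k(retrieved, relevant, k):
    """Return the fraction of the top-k retrieved IDs that are relevant.

    retrieved: ranked list of result IDs, best match first
    relevant:  set of IDs judged relevant for this query
    k:         cutoff rank (must be positive)
    """
    if k <= 0:
        raise ValueError("k must be positive")
    top_k = retrieved[:k]
    hits = sum(1 for item in top_k if item in relevant)
    # Divide by k (not len(top_k)), so a short result list is penalized.
    return hits / k


# Hypothetical ranked captions returned for a single image query.
retrieved_ids = ["cap_7", "cap_2", "cap_9", "cap_4", "cap_1"]
# Hypothetical ground-truth captions for that image.
relevant_ids = {"cap_7", "cap_4", "cap_5"}

print(precision_at_k(retrieved_ids, relevant_ids, k=5))  # 2 hits out of 5
print(precision_at_k(retrieved_ids, relevant_ids, k=3))  # 1 hit out of 3
```

Averaging this score over a set of labeled image queries gives a single number for comparing one search configuration against another.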

Watch on Coursera ↗

(saves to browser)

Sign in to unlock AI tutor explanation · ⚡30

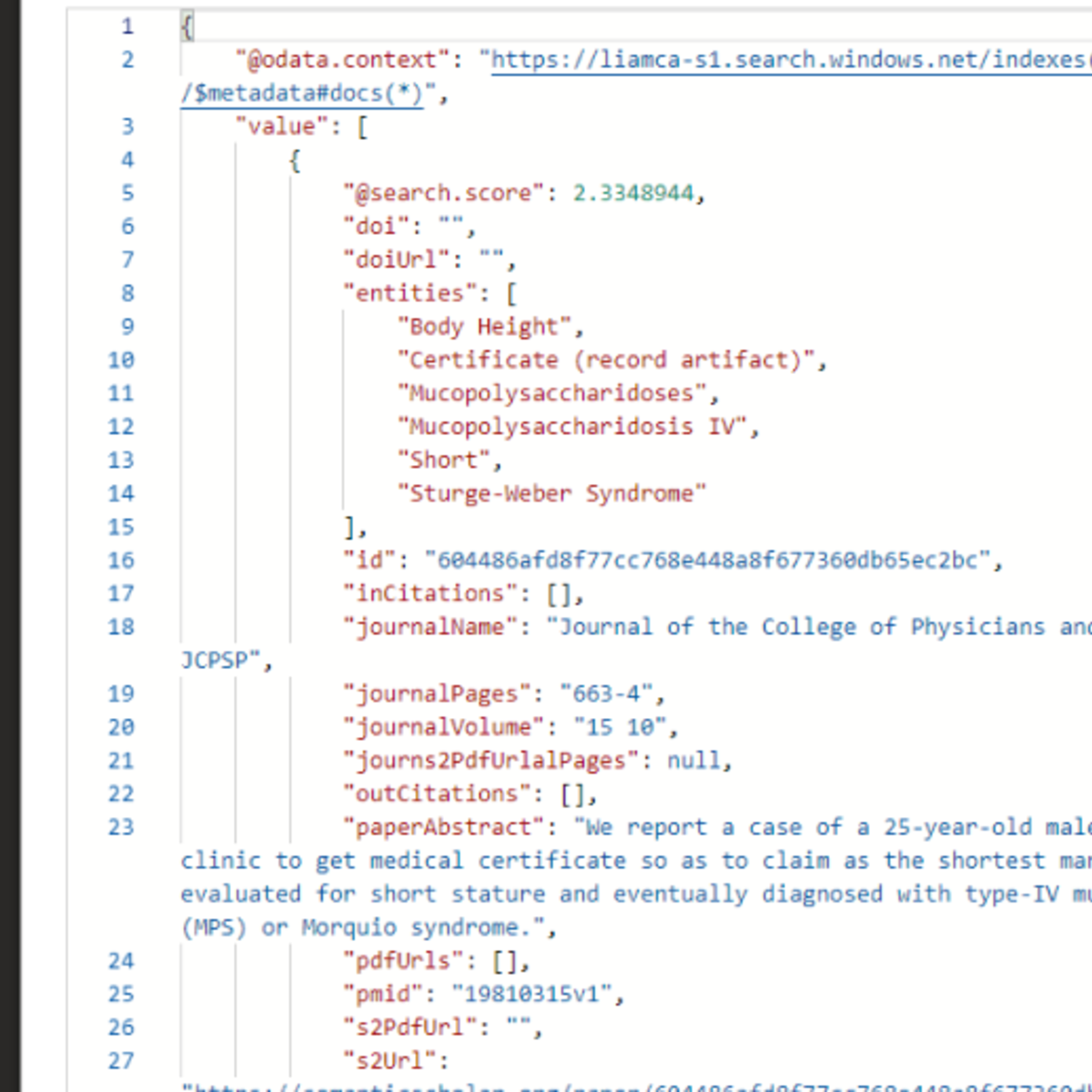

More on: RAG Basics

DeepCamp AI