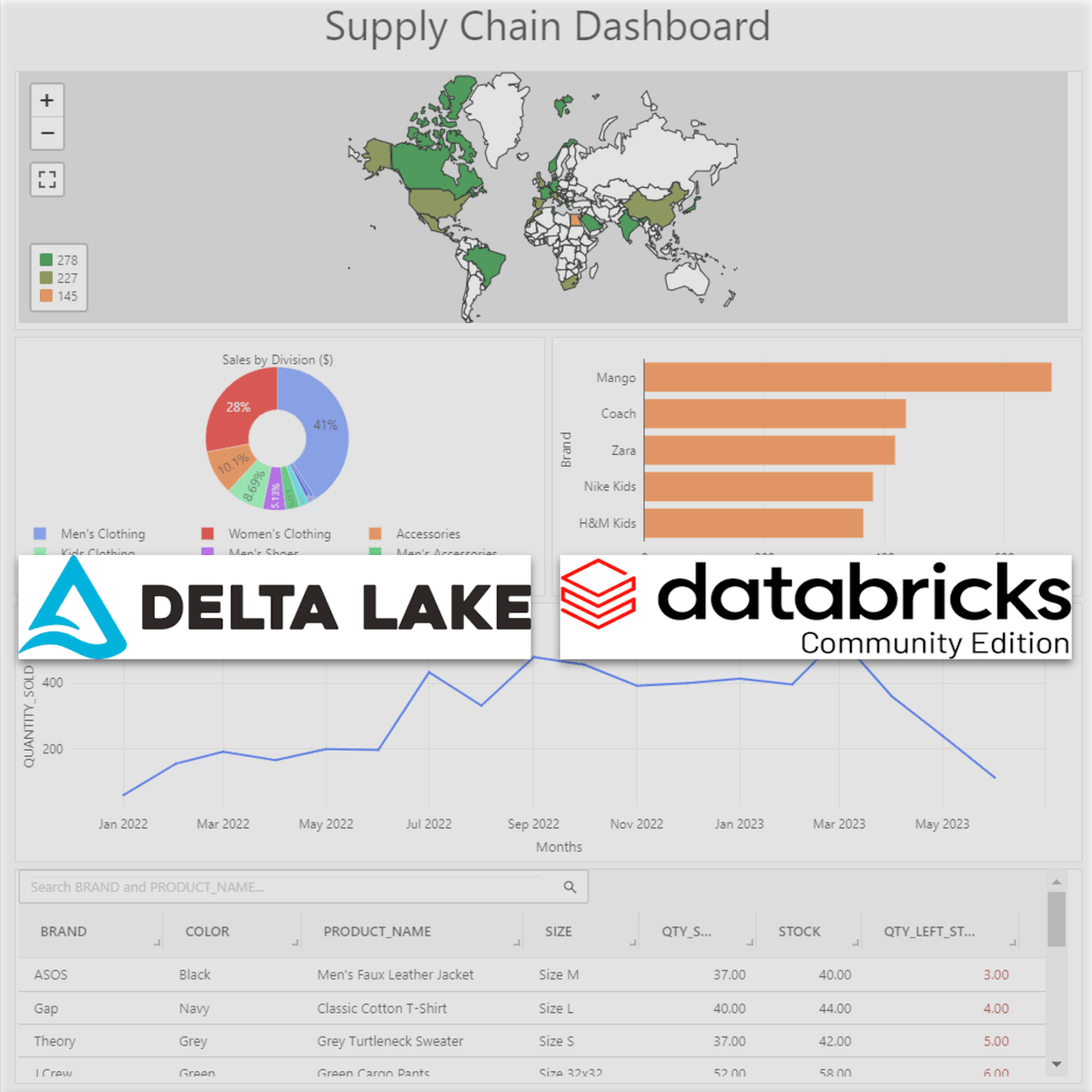

Stream & Unify Data Schemas with CDC

Imagine deploying schema changes with confidence, knowing your pipeline will handle them gracefully, your consumers will stay healthy, and your data will stay consistent. That is the difference between hoping your CDC pipeline works and knowing it will. In this course you will build a working, vendor‑neutral change data capture (CDC) pipeline that merges evolving source schemas into a single, unified table.

You will start by using Debezium to stream changes from Postgres or MySQL into Kafka, with Schema Registry enforcing compatibility between schema versions. You will then apply streaming SQL in Flink (or ksqlDB) to map, cast, and merge divergent fields into a canonical model. Finally, you will persist the results to an Apache Iceberg table and query it directly with Trino. Along the way, you will learn practical strategies for managing schema drift, choosing a compatibility mode (for example, backward or full), and avoiding breaking changes for downstream consumers. Everything runs locally with Docker, so you can reproduce it anywhere and later carry the same patterns to your cloud stack.
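To make the backward and full compatibility modes mentioned above more concrete, here is a minimal sketch of the underlying idea. It is not Schema Registry's implementation: it assumes a hypothetical, heavily simplified schema model (field name mapped to a type name and a "has default" flag) and Avro-style reader/writer resolution without type promotions. Backward means a consumer on the new schema can read data written with the old one; full means that holds in both directions.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Field:
    """Simplified field descriptor (hypothetical): a type name and whether a default exists."""
    type: str
    has_default: bool = False


# Hypothetical simplification: field name -> descriptor.
Schema = Dict[str, Field]


def can_read(reader: Schema, writer: Schema) -> bool:
    """Return True if data written with `writer` can be decoded with `reader`.

    Simplified Avro-style resolution: fields the writer has but the reader
    lacks are ignored; fields the reader expects but the writer never wrote
    must have a default; shared fields must have identical types (real Avro
    also allows some promotions, e.g. int -> long, which are omitted here).
    """
    for name, field in reader.items():
        if name in writer:
            if writer[name].type != field.type:
                return False
        elif not field.has_default:
            return False
    return True


def is_backward_compatible(new: Schema, old: Schema) -> bool:
    # BACKWARD: consumers using the new schema can read data written with the old one.
    return can_read(reader=new, writer=old)


def is_full_compatible(new: Schema, old: Schema) -> bool:
    # FULL: backward and forward (consumers on the old schema can read new data).
    return can_read(reader=new, writer=old) and can_read(reader=old, writer=new)


if __name__ == "__main__":
    v1: Schema = {"id": Field("long"), "email": Field("string")}
    # v2 adds a field with a default: safe in both directions.
    v2: Schema = {**v1, "tier": Field("string", has_default=True)}
    # v3 adds a field without a default: data written with v1 cannot fill it.
    v3: Schema = {**v1, "tier": Field("string")}

    print(is_backward_compatible(v2, v1), is_full_compatible(v2, v1))
    print(is_backward_compatible(v3, v1), is_full_compatible(v3, v1))
```

The sketch shows why "add optional fields with defaults" is the usual rule of thumb for evolving CDC schemas: it is the one change here that passes both checks.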

This course is designed for engineers working with Kafka, Debezium, and streaming SQL who need reliable schema evolution and canonical modeling skills.

Learners should be familiar with basic SQL, Docker, and Kafka or general streaming concepts.

By the end of the course, you will be able to implement a small end‑to‑end CDC pipeline that streams changes from a source database and unifies evolving schemas into a single queryable table.
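The unification step described in this course (mapping, casting, and merging divergent fields into one table) can be sketched in plain Python to show the logic outside of Flink. This is a conceptual illustration only, under stated assumptions: two hypothetical source tables whose change events follow Debezium's envelope shape (`op`, `before`, `after`), with invented column names (`full_name`, `signup_ts`, `first_name`, `last_name`, `created_at`, `tier`) and an invented canonical model. In the pipeline itself, this logic would be expressed as streaming SQL and the table written to Iceberg.

```python
from datetime import datetime, timezone
from typing import Any, Dict, Tuple

Row = Dict[str, Any]
# Canonical table keyed by (source, id) so ids from different databases cannot collide.
Table = Dict[Tuple[str, int], Row]


def to_canonical(source: str, row: Row) -> Row:
    """Map a source-specific row onto one canonical shape (all columns hypothetical)."""
    if source == "pg":
        # Hypothetical Postgres source: one full_name column, signup time as epoch millis.
        name = row["full_name"]
        ts = datetime.fromtimestamp(row["signup_ts"] / 1000, tz=timezone.utc)
    elif source == "mysql":
        # Hypothetical MySQL source: split name columns, ISO-8601 string assumed to be UTC.
        name = f'{row["first_name"]} {row["last_name"]}'.strip()
        ts = datetime.fromisoformat(row["created_at"]).replace(tzinfo=timezone.utc)
    else:
        raise ValueError(f"unknown source: {source}")
    return {
        "customer_id": int(row["id"]),   # cast ids to a common integer type
        "name": name,
        "signed_up_at": ts.isoformat(),  # normalize timestamps to UTC ISO-8601
        # Field added in a later schema version; older events get a default.
        "tier": row.get("tier", "standard"),
    }


def apply_change(table: Table, source: str, event: Row) -> None:
    """Apply one Debezium-style change event (op/before/after) to the canonical table."""
    op = event["op"]
    if op in ("c", "r", "u"):  # create, snapshot read, update -> upsert the after-image
        after = event["after"]
        table[(source, int(after["id"]))] = to_canonical(source, after)
    elif op == "d":  # delete: the row image (at least the key) is in `before`
        table.pop((source, int(event["before"]["id"])), None)
    # Other ops (e.g. truncate) are ignored in this sketch.


if __name__ == "__main__":
    unified: Table = {}
    apply_change(unified, "pg", {"op": "c", "before": None,
                 "after": {"id": 1, "full_name": "Ada Lovelace", "signup_ts": 0}})
    apply_change(unified, "mysql", {"op": "c", "before": None,
                 "after": {"id": "1", "first_name": "Alan", "last_name": "Turing",
                           "created_at": "2024-01-02T03:04:05", "tier": "gold"}})
    apply_change(unified, "pg", {"op": "d", "before": {"id": 1}, "after": None})
    for key, row in sorted(unified.items()):
        print(key, row)
```

Keying on the source plus the primary key is one simple way to merge tables whose ids may overlap; a real canonical model might instead use a business key shared across sources.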

This course is available on Coursera.
