Scaling Test Time Compute to Multi-Agent Civilizations — Noam Brown, OpenAI

Solving Poker and Diplomacy, Debating RL+Reasoning with Ilya, what's *wrong* with the System 1/2 analogy, and where Test-Time Compute hits a wall

Timestamps

00:00 Intro – Diplomacy, Cicero & World Championship

02:00 Reverse Centaur: How AI Improved Noam’s Human Play

05:00 Turing Test Failures in Chat: Hallucinations & Steerability

07:30 Reasoning Models & Fast vs. Slow Thinking Paradigm

11:00 System 1 vs. System 2 in Visual Tasks (GeoGuessr, Tic-Tac-Toe)

14:00 The Deep Research Existence Proof for Unverifiable Domains

17:30 Harnesses, Tool Use, and Fragility in AI Agents

21:00 The Case Against Over-Reliance on Scaffolds and Routers

24:00 Reinforcement Fine-Tuning and Long-Term Model Adaptability

28:00 Ilya’s Bet on Reasoning and the O-Series Breakthrough

34:00 Noam’s Dev Stack: Codex, Windsurf & AGI Moments

38:00 Building Better AI Developers: Memory, Reuse, and PR Reviews

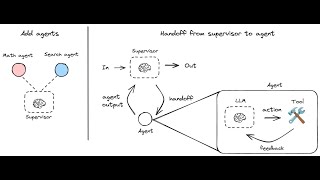

41:00 Multi-Agent Intelligence and the “AI Civilization” Hypothesis

44:30 Implicit World Models and Theory of Mind Through Scaling

48:00 Why Self-Play Breaks Down Beyond Go and Chess

54:00 Designing Better Benchmarks for Fuzzy Tasks

57:30 The Real Limits of Test-Time Compute: Cost vs. Time

1:00:30 Data Efficiency Gaps Between Humans and LLMs

1:03:00 Training Pipeline: Pretraining, Midtraining, Posttraining

1:05:00 Games as Research Proving Grounds: Poker, MTG, Stratego

1:10:00 Closing Thoughts – Five-Year View and Open Research Directions

Chapters

00:00:00 Intro & Guest Welcome

00:00:33 Diplomacy AI & Cicero Insights

00:03:49 AI Safety, Language Models, and Steerability

00:05:23 O Series Models: Progress and Benchmarks

00:08:53 Reasoning Paradigm: Thinking Fast and Slow in AI

00:14:02 Design Questions: Harnesses, Tools, and Test Time Compute

00:20:32 Reinforcement Fine-tuning & Model Specialization

00:21:52 The Rise of Reasoning Models at OpenAI

00:29:33 Data Efficiency in Machine Learning

00:33:21 Coding & AI: Codex, Workflows, and Developer Experience

00:41:38 Multi-Age
