RunwayML Gen 1 vs Automatic1111 - Video creation with StableDiffusion

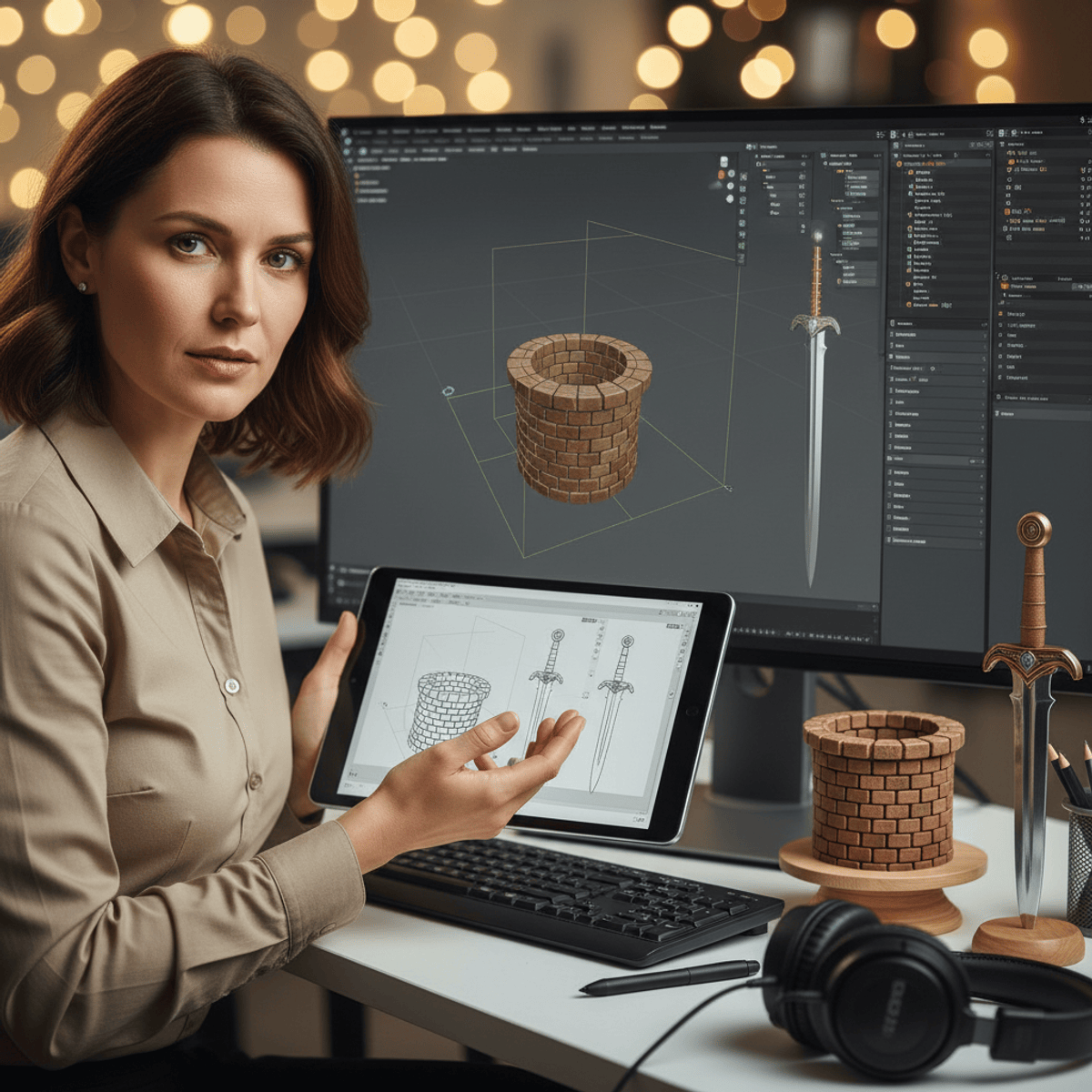

In this video we take a deeper dive into creating videos with StableDiffusion, comparing RunwayML Gen 1 with Automatic1111.

First we create a short animation in Blender, then feed it into RunwayML Gen 1 and afterwards into Automatic1111 (batch img2img with ControlNet), explaining the whole workflow of creating a video animation in each system. We also show how to considerably reduce flickering and temporal incoherence in StableDiffusion videos.

Finally, we compare the results, discuss the pros and cons of each system along with their requirements and support, and give a final conclusion.

Chapters:

00:00 Introduction

01:16 Preparing some simple video footage in Blender

05:00 Creating a video animation with RunwayML Gen 1, using our video footage as an input

09:54 Creating a video animation with Automatic1111/batch img2img/ControlNet, using the same footage

13:40 Improving the temporal coherence in Automatic1111 videos

14:42 Comparison, pros and cons of RunwayML Gen 1 vs Automatic1111, final conclusion

Also visit our music channel to watch some great music videos created with StableDiffusion, Unreal Engine and Blender:

https://www.youtube.com/@-vero-

Useful Links:

---------------------

RunwayML website:

https://runwayml.com

Local installation guide for Automatic1111 on a Windows PC:

https://stable-diffusion-art.com/install-windows/

and on a Mac with Apple Silicon:

https://stable-diffusion-art.com/install-mac/

Running StableDiffusion Automatic1111 on Google Colab:

https://stable-diffusion-art.com/automatic1111-colab/

Installing the ControlNet extension for Automatic1111:

https://github.com/Mikubill/sd-webui-controlnet

Link to the Mixamo site:

https://www.mixamo.com/

Link to Sketchfab for downloading free 3D models that you can use in Blender:

https://sketchfab.com/

Download Blender:

https://www.blender.org/download/

Creating Mixamo animations in Blender:

https://youtu.be/eFjECaeY4Go

Using LoRA models in StableDiffusion Automatic1111:

ht
