Retrieval Augmented Generation (RAG) Explained: Embedding, Sentence BERT, Vector Database (HNSW)

Get your $5 coupon for Gradient: https://gradient.1stcollab.com/umarjamilai

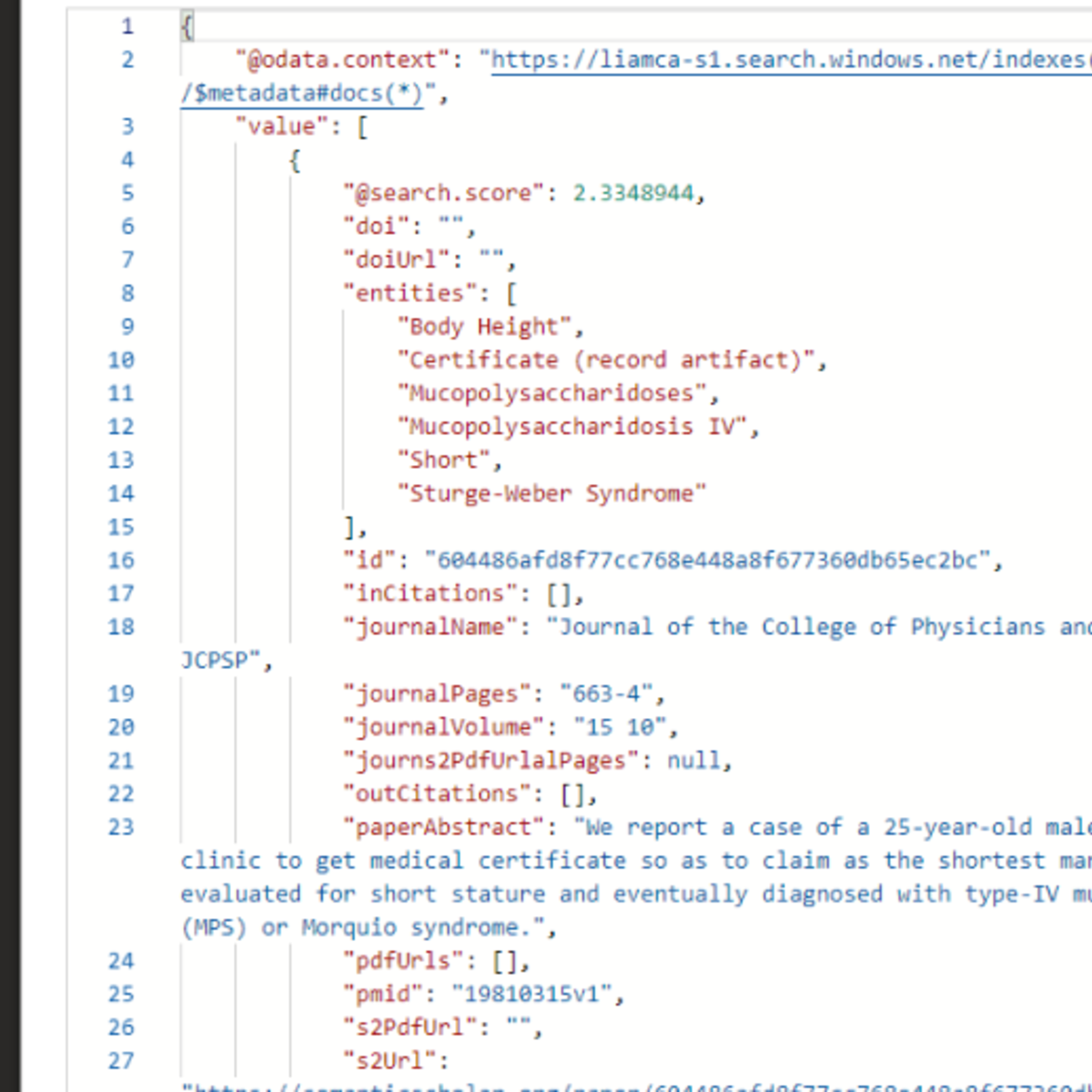

In this video we explore the entire Retrieval Augmented Generation pipeline. I start by reviewing language models, their training and inference, and then turn to the main ingredient of a RAG pipeline: embedding vectors. We will see what embedding vectors are, how they are computed, and how to compute them for whole sentences. We will also look at what a vector database is and at the popular HNSW (Hierarchical Navigable Small Worlds) algorithm that vector databases use to find the stored embedding vectors closest to a query.
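As a rough illustration of the retrieval step, here is a minimal sketch of the naive K-NN search that HNSW is meant to speed up: compare the query embedding against every stored embedding and keep the closest ones. The 3-dimensional vectors, the sentences, and the helper names `cosine_similarity` and `top_k` are made up for this example; in a real pipeline the vectors would come from a sentence-embedding model such as Sentence BERT and would have hundreds of dimensions.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def top_k(query, store, k=2):
    """Naive K-NN: score every stored vector, return the k most similar texts."""
    scored = [(cosine_similarity(query, vec), text) for text, vec in store]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [text for _, text in scored[:k]]

# Toy "vector database": (text, embedding) pairs with invented embeddings.
store = [
    ("Paris is the capital of France", [0.9, 0.1, 0.0]),
    ("Cats sleep most of the day", [0.0, 0.2, 0.9]),
    ("France borders Spain", [0.8, 0.3, 0.1]),
]

# Invented embedding for a query about France.
query = [1.0, 0.2, 0.0]
results = top_k(query, store, k=2)
print(results)
```

This scan touches every stored vector, so its cost grows linearly with the size of the store. That is the limitation that approximate methods such as HNSW address by searching a graph of neighbours instead.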

Download the PDF slides: https://github.com/hkproj/retrieval-augmented-generation-notes

Sentence BERT paper: https://arxiv.org/pdf/1908.10084.pdf

Chapters

00:00 - Introduction

02:22 - Language Models

04:33 - Fine-Tuning

06:04 - Prompt Engineering (Few-Shot)

07:24 - Prompt Engineering (QA)

10:15 - RAG pipeline (introduction)

13:38 - Embedding Vectors

19:41 - Sentence Embedding

23:17 - Sentence BERT

28:10 - RAG pipeline (review)

29:50 - RAG with Gradient

31:38 - Vector Database

33:11 - K-NN (Naive)

35:16 - Hierarchical Navigable Small Worlds (Introduction)

35:54 - Six Degrees of Separation

39:35 - Navigable Small Worlds

43:08 - Skip-List

45:23 - Hierarchical Navigable Small Worlds

47:27 - RAG pipeline (review)

48:22 - Closing



