Master ANN Search

Master ANN Search is an intermediate-level course for machine learning engineers and AI practitioners who build high-speed, large-scale vector search systems. As datasets grow into the millions of vectors, brute-force search, which compares each query against every stored vector, becomes too slow for interactive use. This course teaches the practical skills to overcome this challenge using Approximate Nearest Neighbor (ANN) algorithms.

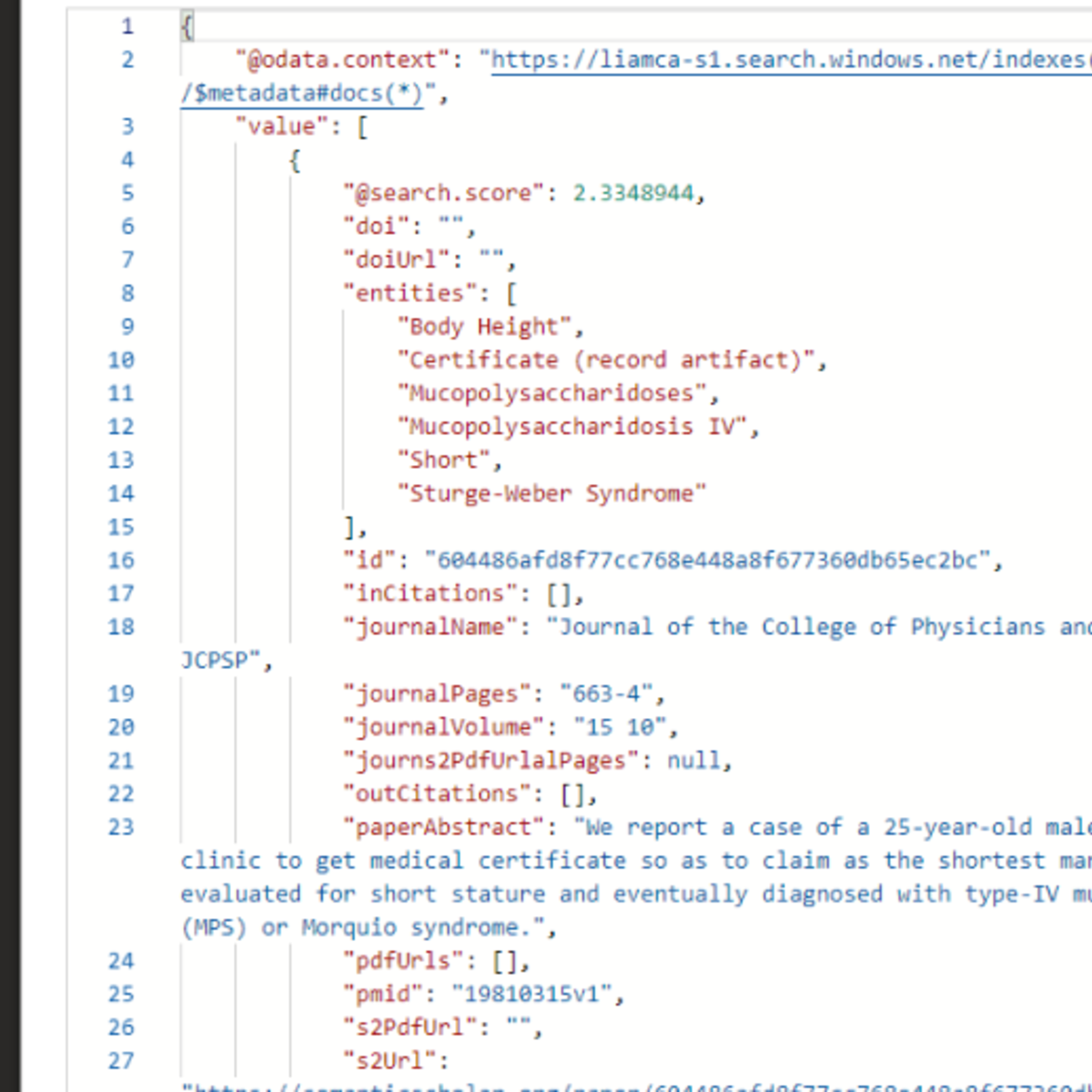

You will get hands-on experience with industry-standard libraries such as FAISS and Annoy, using them to build and prototype vector indexes. Through a series of expert-led videos, readings, and ungraded labs, you will move beyond basic implementation to performance evaluation: measuring and analyzing the trade-off between retrieval accuracy (recall) and speed (latency), and benchmarking your solutions against brute-force search to quantify their effectiveness. The course culminates in a final project in which you optimize an index for a 100k-vector dataset, mirroring the real-world task of balancing accuracy and speed for applications like Retrieval-Augmented Generation (RAG) or recommendation engines. By the end, you’ll be equipped not just to use ANN, but to deploy it strategically.
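
To make the recall-versus-latency trade-off concrete, here is a minimal sketch in plain Python. It is not taken from the course materials and does not use FAISS or Annoy: the helper `subset_knn` is a hypothetical stand-in for an ANN index that simply searches a random quarter of the data, and the dataset size, dimension, and `k` are arbitrary assumptions. It shows how an approximate result can be scored against a brute-force baseline.

```python
import random
import time


def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def brute_force_knn(data, query, k):
    """Exact k nearest neighbors: score every vector, keep the k closest."""
    scored = sorted((sq_dist(vec, query), i) for i, vec in enumerate(data))
    return [i for _, i in scored[:k]]


def subset_knn(data, query, k, candidate_ids):
    """Hypothetical stand-in for an ANN index: search only some candidates."""
    scored = sorted((sq_dist(data[i], query), i) for i in candidate_ids)
    return [i for _, i in scored[:k]]


def recall_at_k(approx_ids, exact_ids):
    """Fraction of the exact top-k that the approximate search recovered."""
    return len(set(approx_ids) & set(exact_ids)) / len(exact_ids)


# Synthetic data; sizes are illustrative assumptions, not course values.
rng = random.Random(0)
dim, n, k = 16, 2000, 10
data = [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(n)]
query = [rng.gauss(0, 1) for _ in range(dim)]

# Baseline: exact search over all n vectors.
start = time.perf_counter()
exact = brute_force_knn(data, query, k)
exact_ms = (time.perf_counter() - start) * 1000

# "Approximate" search over a random 25% of the vectors.
candidates = rng.sample(range(n), n // 4)
start = time.perf_counter()
approx = subset_knn(data, query, k, candidates)
approx_ms = (time.perf_counter() - start) * 1000

recall = recall_at_k(approx, exact)
print(f"brute force: {exact_ms:.2f} ms")
print(f"approximate: {approx_ms:.2f} ms, recall@{k} = {recall:.2f}")
```

With a real library, the approximate search would be replaced by a query against the library's index, while the brute-force baseline and the `recall_at_k` calculation would stay the same. Averaging over many queries gives more stable numbers than the single query used here.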

You will need to be familiar with Python programming, data structures, and basic machine learning concepts. Familiarity with vectors is a plus.
