[Graph Neural Nets] Breaking Symmetry Bottlenecks: How Projector-Based Readouts Supercharge GNNs.

Think about the last time you used a Graph Neural Network. You probably spent weeks fine-tuning the message-passing layers, the depth, and the attention heads. But when it came time to actually get an answer from the graph—the 'readout'—you likely just summed everything up or took an average. It’s a standard move, right?

Well, it turns out that one simple step might be the very thing holding your model back. Today, we’re taking a deep dive into the hidden math of graph learning: Breaking Symmetry Bottlenecks in GNN Readouts.

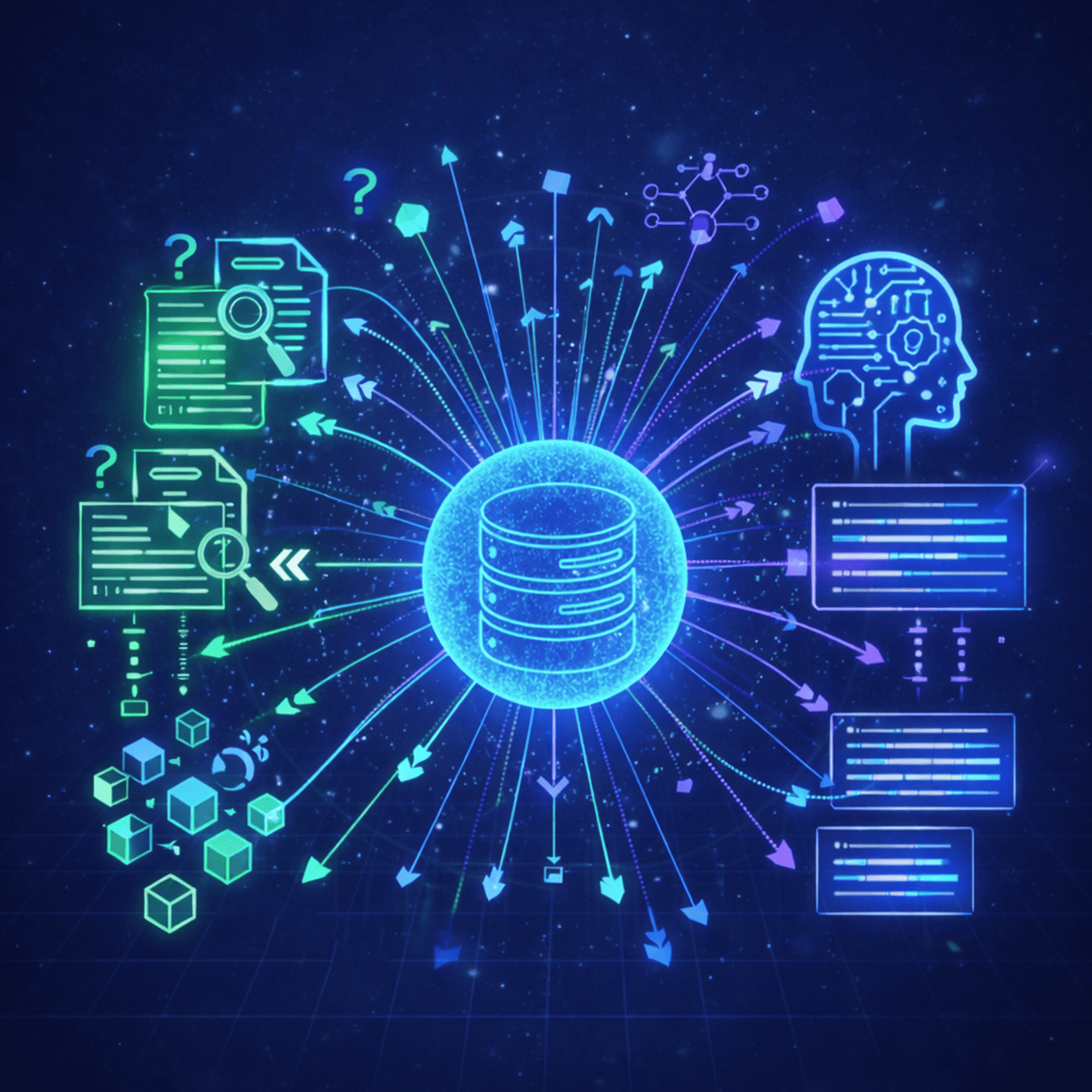

Drawing on research that applies representation theory to graph learning, we’re looking at why standard linear pooling acts as a 'symmetry bottleneck.' We’ll discuss how common aggregation methods like sum and mean pooling act as a Reynolds operator: they average the node embeddings over the symmetry group, which projects them onto the invariant subspace and throws away every component outside it, no matter how powerful your encoder is. We’re breaking down the fix: projector-based invariant readouts that use the graph’s own symmetry groups to see what global pooling misses. If you’ve ever wondered why your GNN can't tell the difference between two complex molecules, the answer may lie in the readout rather than in the layers.
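To make the Reynolds-operator claim concrete, here is a minimal NumPy sketch. It assumes the symmetry group is the full permutation group on a toy set of 4 nodes, and the names `reynolds_average`, `X`, and `Y` are illustrative rather than taken from the research. It checks that averaging node features over every permutation gives the same result as mean pooling, that the operation is a projection, and that two different embedding matrices can collapse to the same readout.

```python
import itertools
import numpy as np

def reynolds_average(X):
    """Average X over all row permutations (Reynolds operator for S_n).

    Brute force over n! permutations, so only suitable for tiny n.
    """
    n = X.shape[0]
    perms = list(itertools.permutations(range(n)))
    return sum(X[list(p)] for p in perms) / len(perms)

rng = np.random.default_rng(0)
X = rng.normal(size=(4, 3))          # 4 nodes, 3 features (toy embeddings)

R = reynolds_average(X)
mean_rows = np.tile(X.mean(axis=0), (4, 1))

# Group averaging leaves every node with the mean-pooled feature vector.
same_as_mean_pooling = np.allclose(R, mean_rows)

# Applying the operator twice changes nothing: it is a projection.
idempotent = np.allclose(reynolds_average(R), R)

# Perturb X by something with zero column mean: the embeddings differ,
# but the mean-pooled readout cannot see the difference.
D = rng.normal(size=(4, 3))
D -= D.mean(axis=0)
Y = X + D
collapsed = np.allclose(X.mean(axis=0), Y.mean(axis=0))

print(same_as_mean_pooling, idempotent, collapsed)
```

The perturbation `D` lives entirely in the part of feature space that the projection removes, which is one way to picture the information a linear pooled readout cannot recover.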

What’s on the Roadmap:

The Reynolds Operator Trap: Why your current readout might be projecting your hard-earned embeddings onto a 'fixed subspace' and discarding everything outside it.

Symmetry Channels: How equivariant projectors decompose graph data to capture structural details that are normally invisible.

Beating the WL-Test: How a simple change in readout design allows GNNs to distinguish 'WL-hard' graph pairs that were previously identical to the model.

The Under-Appreciated Variable: Why readout design is the new frontier for performance in molecular and structural benchmarks.
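The 'symmetry channels' idea from the roadmap can be sketched on a toy case. The example below is an assumption-laden illustration, not the method from the research: it takes a 4-node cycle, uses only the rotation subgroup Z_4 of its automorphisms, builds the standard character projectors for that group, and reads out the squared norm of each projected component. The helper names `cyclic_projectors` and `channel_energies` are hypothetical.

```python
import numpy as np

def cyclic_projectors(n):
    """Character projectors for Z_n acting on node signals by cyclic shifts."""
    shift = np.roll(np.eye(n), 1, axis=0)   # permutation matrix of one rotation
    powers = [np.linalg.matrix_power(shift, g) for g in range(n)]
    projectors = []
    for k in range(n):
        chars = np.exp(2j * np.pi * k * np.arange(n) / n)  # character of irrep k
        P = sum(np.conj(c) * M for c, M in zip(chars, powers)) / n
        projectors.append(P)
    return projectors

def channel_energies(x, projectors):
    """Invariant readout: squared norm of the signal in each symmetry channel."""
    return np.array([np.linalg.norm(P @ x) ** 2 for P in projectors])

projectors = cyclic_projectors(4)

# Two node signals on the 4-cycle with the same sum (and the same mean).
x = np.array([1.0, 0.0, 1.0, 0.0])   # active nodes are opposite each other
y = np.array([1.0, 1.0, 0.0, 0.0])   # active nodes are adjacent

ex = channel_energies(x, projectors)
ey = channel_energies(y, projectors)

# Channel 0 is the trivial (sum/mean pooling) channel; the rest is extra.
print("sum pooling:", x.sum(), y.sum())
print("channel energies x:", np.round(ex, 3))
print("channel energies y:", np.round(ey, 3))
```

In this sketch the trivial channel agrees for both signals, which is all that sum or mean pooling sees, while the remaining channels differ. Each energy is unchanged when the signal is rotated around the cycle, so the readout stays invariant under the assumed group.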
