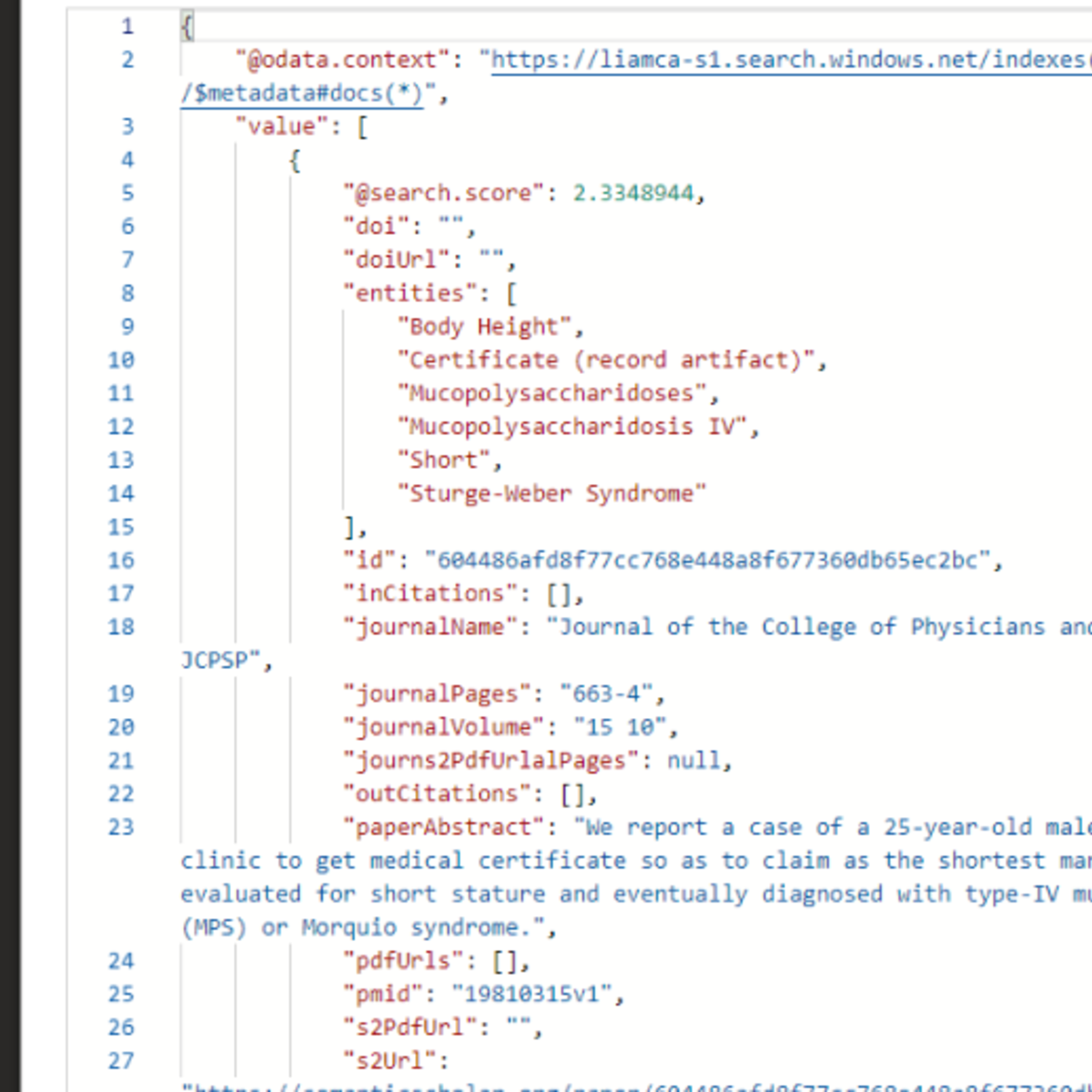

Demo: Private RAG with a local Mistral 7B LLM and Weaviate on K8s

A live demo showing how to deploy an end-to-end Retrieval-Augmented Generation (RAG) stack, including the LLM and the embedding server, on any K8s cluster. The stack consists of:

Lingo as the model proxy and autoscaler: https://github.com/substratusai/lingo

Verba as the RAG application: https://github.com/weaviate/Verba

Weaviate as the Vector DB: https://github.com/weaviate/weaviate

Mistral-7B-Instruct-v0.2 as the LLM

STAPI with MiniLM-L6-v2 as the embedding model: https://github.com/substratusai/stapi
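
To make the division of labour between these components concrete, here is a minimal sketch of the RAG flow in plain Python. It is not the demo's code: `embed`, `InMemoryStore`, and `build_chat_request` are hypothetical stand-ins for the embedding server, the vector DB, and the request sent to the LLM, and the model name is a placeholder. The word-count "embedding" is only a toy; a real deployment would call the embedding model instead. The chat payload is assumed to follow the OpenAI-style chat format.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for the embedding server: word counts, not a real embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class InMemoryStore:
    """Hypothetical stand-in for the vector DB: keeps (text, vector) pairs in a list."""

    def __init__(self) -> None:
        self.items: list[tuple[str, Counter]] = []

    def add(self, text: str) -> None:
        self.items.append((text, embed(text)))

    def search(self, query: str, k: int = 2) -> list[str]:
        q = embed(query)
        ranked = sorted(self.items, key=lambda it: cosine(q, it[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


def build_chat_request(question: str, context: list[str],
                       model: str = "placeholder-model") -> dict:
    """Assemble an OpenAI-style chat payload; the model name is a placeholder."""
    context_block = "\n".join(f"- {c}" for c in context)
    prompt = (
        "Answer using only this context:\n"
        f"{context_block}\n\nQuestion: {question}"
    )
    return {"model": model, "messages": [{"role": "user", "content": prompt}]}


# Index a few documents, retrieve the best match, and build the LLM request.
store = InMemoryStore()
store.add("Lingo proxies and autoscales model servers on Kubernetes.")
store.add("Weaviate stores vectors for similarity search.")
store.add("Verba is the RAG application in the demo.")

question = "What does Weaviate store?"
retrieved = store.search(question, k=1)
request = build_chat_request(question, retrieved)
```

In the deployed stack, the resulting payload would be sent to the LLM endpoint rather than built and discarded; the sketch stops short of any network call so that it runs anywhere.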

Blog post with copy-pasteable steps: https://www.substratus.ai/blog/lingo-weaviate-private-rag
