Automate, Optimize, and Benchmark Data Pipelines

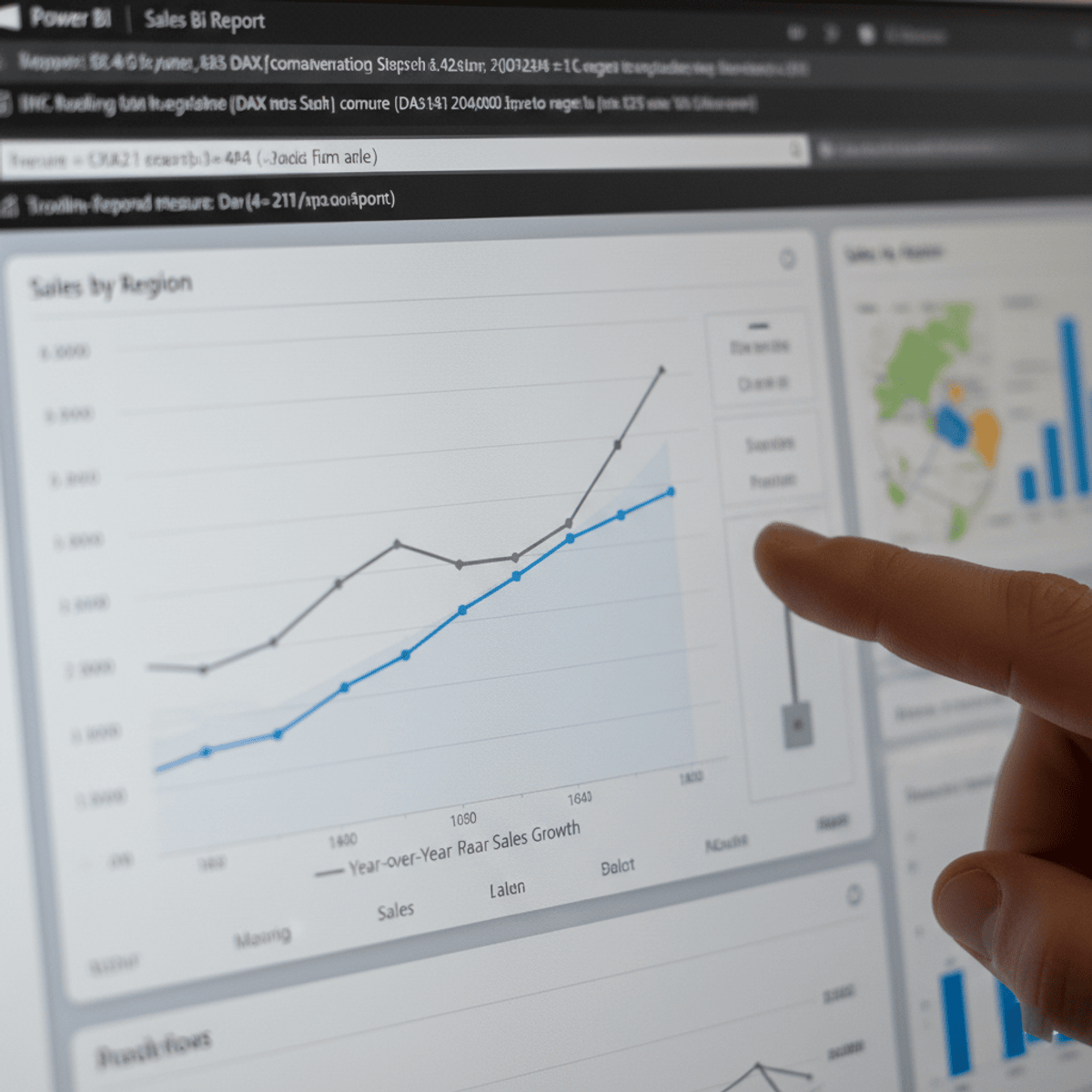

Did you know that two pipelines performing the same task can differ in run time by over 10x depending on design choices? Benchmarking and automation are essential for building fast, scalable, and cost-efficient data systems.

This short course helps data engineers and pipeline architects optimize data processing systems through performance benchmarking and automation scripting, improving efficiency and scalability in enterprise environments.

By completing this course, you will be able to compare competing pipeline designs using run-time metrics, justify the most efficient approach, and automate the creation of transformation models using configuration-driven scripts. These skills help you build smarter, faster, and more reliable data pipelines.
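As a rough illustration of comparing two designs with run-time metrics, the sketch below times two hypothetical implementations of the same aggregation. It is not taken from the course material: the function names, the synthetic data, and the choice of min/median/max as the reported statistics are all assumptions made for the example.

```python
import statistics
import time


def pipeline_rescan_per_key(rows):
    """Hypothetical design A: rescan the whole input once per distinct key."""
    totals = {}
    for key in {k for k, _ in rows}:
        totals[key] = sum(v for k, v in rows if k == key)
    return totals


def pipeline_single_pass(rows):
    """Hypothetical design B: aggregate all keys in a single pass."""
    totals = {}
    for key, value in rows:
        totals[key] = totals.get(key, 0) + value
    return totals


def benchmark(func, rows, repeats=5):
    """Run func several times and return run-time statistics in seconds."""
    timings = []
    for _ in range(repeats):
        start = time.perf_counter()
        func(rows)
        timings.append(time.perf_counter() - start)
    return {
        "min": min(timings),
        "median": statistics.median(timings),
        "max": max(timings),
    }


# Synthetic input (an assumption): 20,000 rows spread over 50 keys.
rows = [(i % 50, i) for i in range(20_000)]

# Both designs must produce the same result before timings are compared.
assert pipeline_rescan_per_key(rows) == pipeline_single_pass(rows)

for func in (pipeline_rescan_per_key, pipeline_single_pass):
    stats = benchmark(func, rows)
    print(
        f"{func.__name__}: median {stats['median']:.4f}s "
        f"(min {stats['min']:.4f}s, max {stats['max']:.4f}s)"
    )
```

Reporting the median over several repeats, rather than a single run, makes the comparison less sensitive to one-off noise on the machine. Checking that both designs return identical output first keeps the comparison fair.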

By the end of this course, you will be able to:

Evaluate competing pipeline designs by comparing run-time statistics to justify the faster option.

Create an automated script to generate data transformation models from configuration files.
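The second objective, generating transformation models from configuration, might look something like the following minimal sketch. Everything specific here is an assumption for illustration: the configuration layout, the model and table names, and the `CREATE OR REPLACE VIEW` dialect are hypothetical and not drawn from the course.

```python
import json
from string import Template

# Hypothetical configuration. In practice this would likely be read from a
# JSON or YAML file rather than embedded in the script.
CONFIG_JSON = """
{
  "models": [
    {"name": "stg_orders", "source": "raw.orders",
     "columns": {"order_id": "id",
                 "order_total": "CAST(amount AS DECIMAL(12, 2))"}},
    {"name": "stg_customers", "source": "raw.customers",
     "columns": {"customer_id": "id", "email": "LOWER(email)"}}
  ]
}
"""

# SQL template for one model; the dialect is an assumption.
MODEL_TEMPLATE = Template(
    "-- Generated from configuration; do not edit by hand.\n"
    "CREATE OR REPLACE VIEW $name AS\n"
    "SELECT\n$select_list\nFROM $source;\n"
)


def render_model(spec):
    """Render the SQL for a single model specification."""
    select_list = ",\n".join(
        f"    {expr} AS {alias}" for alias, expr in spec["columns"].items()
    )
    return MODEL_TEMPLATE.substitute(
        name=spec["name"], source=spec["source"], select_list=select_list
    )


def generate_models(config):
    """Return a mapping of model name to generated SQL text."""
    return {spec["name"]: render_model(spec) for spec in config["models"]}


models = generate_models(json.loads(CONFIG_JSON))
for sql in models.values():
    print(sql)
```

Adding a new model then means adding an entry to the configuration rather than writing another SQL file by hand. A fuller script would also validate the configuration and write each model to its own file.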

This course is unique because it blends performance engineering with automation, giving you practical experience in benchmarking real pipelines and generating transformation workflows programmatically to support large-scale data operations.

To be successful in this course, you should have:

SQL experience

Data transformation knowledge

Basic scripting skills

Familiarity with pipeline architecture


