AI Tooling Capstone: Serverless Multi-Model Systems

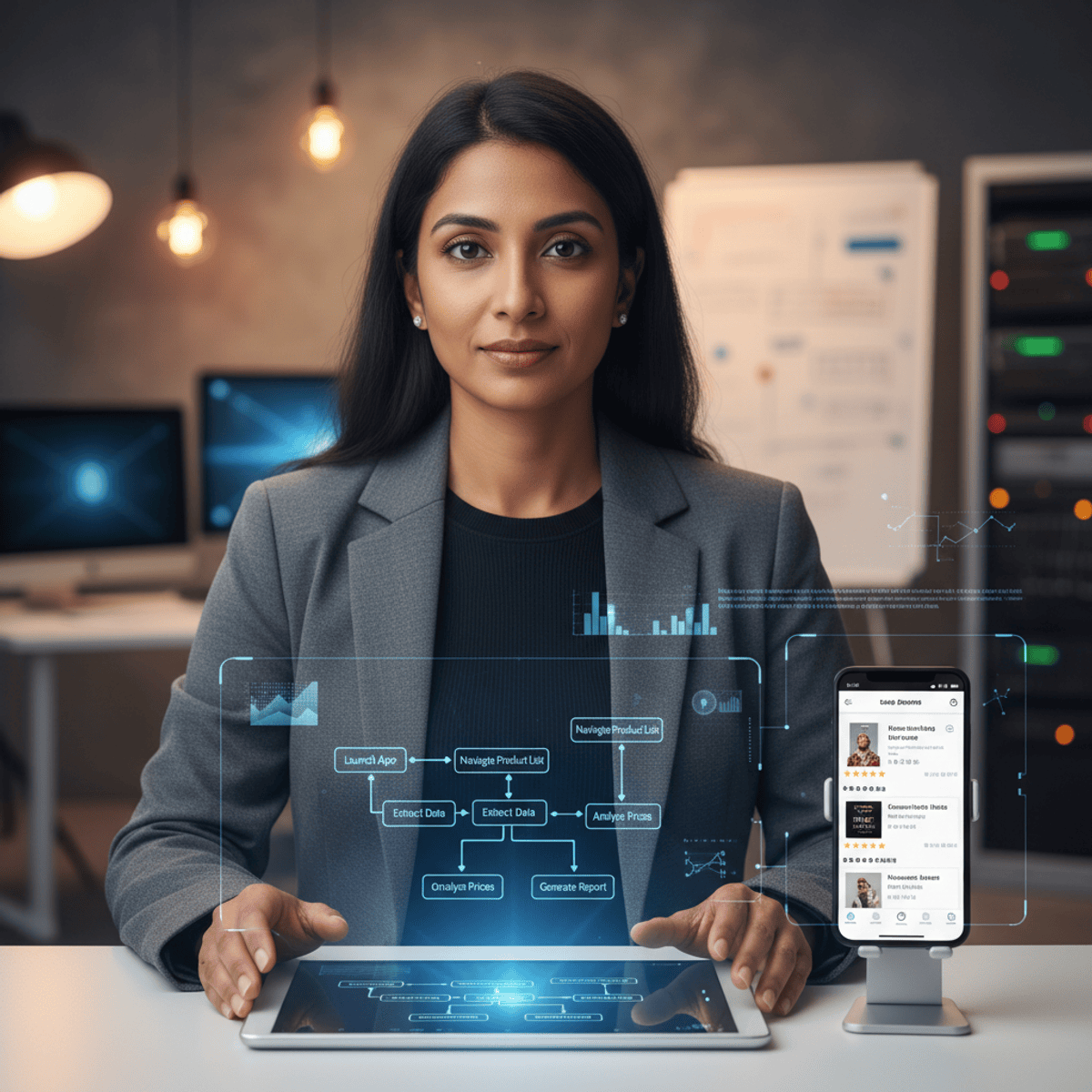

Build and deploy a production serverless multi-model Artificial Intelligence (AI) system on Amazon Web Services (AWS) that integrates Amazon Bedrock for cloud Large Language Model (LLM) execution and Ollama for local LLM execution. This capstone, the final course in the Applied AI Engineering specialization, synthesizes what you learned in 19 prior courses into one comprehensive engineering project. You will implement Rust-based LLM applications and deploy them to AWS Lambda with the Cargo Lambda toolchain, design YAML-driven prompt engineering workflows for structured configuration management, and build multi-model flow orchestration that routes each request to an appropriate model based on its task requirements.

The course begins with multi-model architecture fundamentals: the evolving AI model ecosystem, model selection criteria for production workloads, and multi-provider integration patterns that enable fallback and cost optimization. You then advance to serverless production deployment, implementing an Amazon Bedrock router for dynamic model selection and deploying Rust serverless functions with Cargo Lambda, which offer cold start and memory advantages for AI workloads. The final capstone challenge requires you to integrate multi-model orchestration, YAML prompt configuration, and serverless deployment into a complete production system evaluated against performance, cost, and reliability standards.
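To make "routing requests to appropriate models with fallback" concrete, here is a minimal std-only Rust sketch. It is not the course's implementation: the `Task` enum, the `ModelBackend` trait, the `Router` type, and the model names are all hypothetical, and `MockBackend` stands in for real Amazon Bedrock or Ollama clients, whose APIs are not shown. Each task maps to an ordered list of backends; the router tries the primary first and falls back to the next one on error.

```rust
use std::collections::HashMap;

/// Hypothetical task categories a router might distinguish.
#[derive(Debug, Clone, Copy, PartialEq, Eq, Hash)]
enum Task {
    Summarize,
    Code,
    Chat,
}

/// A model backend. In a real system this would wrap a Bedrock or Ollama client (assumption).
trait ModelBackend {
    fn invoke(&self, prompt: &str) -> Result<String, String>;
}

/// Stand-in backend used instead of a real provider client.
struct MockBackend {
    name: String,
    available: bool,
}

impl ModelBackend for MockBackend {
    fn invoke(&self, prompt: &str) -> Result<String, String> {
        if self.available {
            Ok(format!("[{}] {}", self.name, prompt))
        } else {
            Err(format!("{} unavailable", self.name))
        }
    }
}

/// Maps each task to an ordered list of backends: primary first, then fallbacks.
struct Router {
    routes: HashMap<Task, Vec<Box<dyn ModelBackend>>>,
}

impl Router {
    fn new() -> Self {
        Router { routes: HashMap::new() }
    }

    fn add_route(&mut self, task: Task, backends: Vec<Box<dyn ModelBackend>>) {
        self.routes.insert(task, backends);
    }

    /// Tries each backend for the task in order and returns the first success.
    fn route(&self, task: Task, prompt: &str) -> Result<String, String> {
        let backends = self
            .routes
            .get(&task)
            .ok_or_else(|| format!("no route for {:?}", task))?;
        let mut errors = Vec::new();
        for backend in backends {
            match backend.invoke(prompt) {
                Ok(text) => return Ok(text),
                Err(e) => errors.push(e),
            }
        }
        Err(format!("all backends failed: {}", errors.join("; ")))
    }
}

/// Helper to build a boxed mock backend.
fn mock(name: &str, available: bool) -> Box<dyn ModelBackend> {
    Box::new(MockBackend { name: name.to_string(), available })
}

fn main() {
    let mut router = Router::new();
    // Hypothetical model names; real Bedrock model IDs and Ollama model tags differ.
    router.add_route(
        Task::Code,
        vec![mock("cloud-code-model", false), mock("local-code-model", true)],
    );
    router.add_route(Task::Summarize, vec![mock("cloud-summary-model", true)]);

    // The cloud model is marked unavailable, so the local fallback answers.
    println!("{:?}", router.route(Task::Code, "fix this bug"));
    println!("{:?}", router.route(Task::Summarize, "long text"));
    // No route is configured for Chat, so this returns an error.
    println!("{:?}", router.route(Task::Chat, "hi"));
}
```

Ordering backends per task keeps the fallback policy declarative, which is the kind of routing table a YAML configuration file could supply in a fuller system.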

