AI Safety & Ethics

Alignment, interpretability, AI risks, and building safe AI systems

Dev.to AI

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

Auditing Claude Code: what I found and how I contained it

What Claude Code captures from your system (and how to contain it). In early March 2026, I noticed Claude Code behaving oddly with my shell environment. Sandbox…

Dev.to AI

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

Large Language Letters 04/12/2026

Automated draft from LLL. Ajeya Cotra: AI Safety Window Measured in Months, Not Years. The "Crunch Time" Thesis Gains Urgency Amidst AI Progress. On The Cognitive…

Dev.to · Zafer Dace

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

The Machine Is Real: An AI Escaped Its Sandbox and Sent an Email

An Anthropic researcher was eating a sandwich in a park when he got an email from an AI that wasn't...

Medium · Cybersecurity

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

Why CIOs and CISOs Block AI and What AI Vendors Miss

AI adoption is accelerating across the enterprise.

Hacker News

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

Artificial Intelligence and Human Legal Reasoning

Article URL: https://papers.ssrn.com/sol3/papers.cfm?abstract_id=6525800 · Comments URL: https://news.ycombinator.com/item?id=47742652 · Points: 1 · Comments: 0

Medium · AI

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

AI Psychosis: The Danger of a World That Never Disagrees With You

For decades, we were warned that the danger of Artificial Intelligence was that it would eventually become too smart and decide it didn’t…

Medium · AI

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

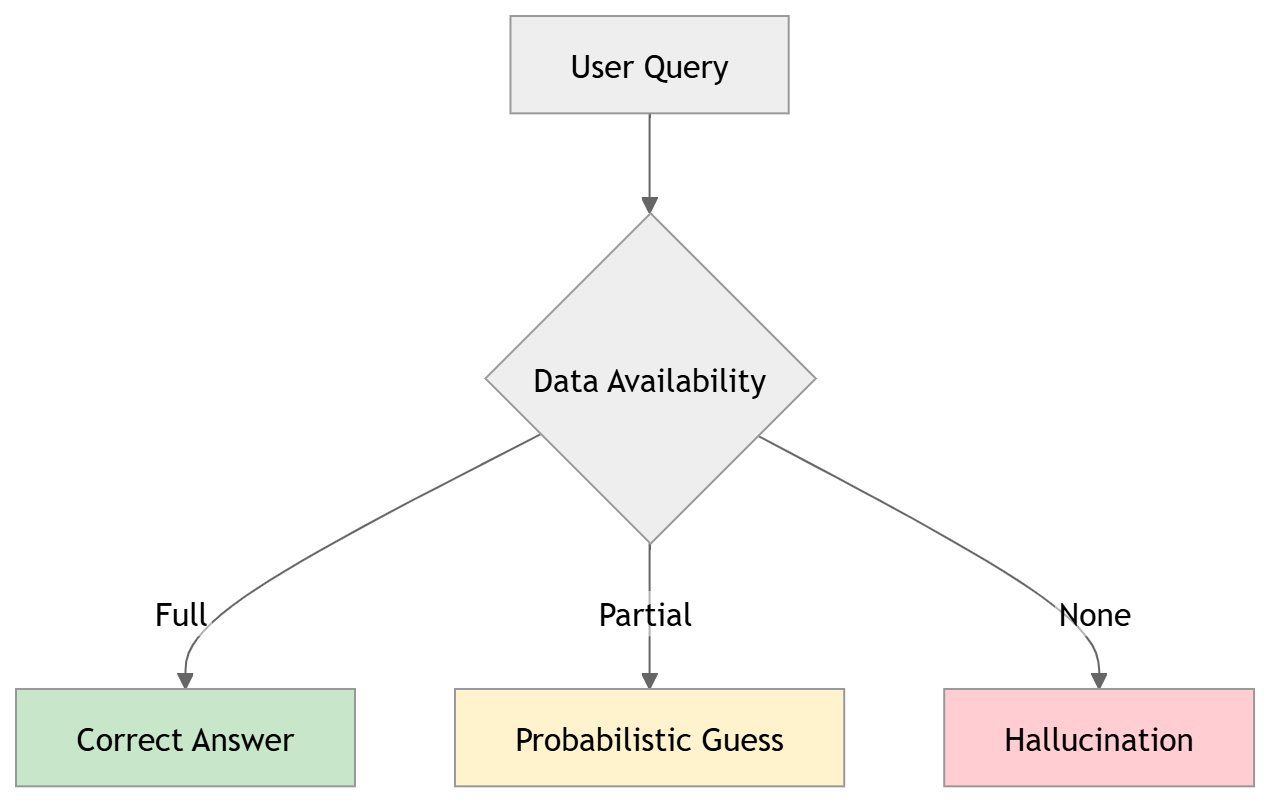

“Why does AI lie?” (Hallucination Testing)

If you use AI, you’ve probably heard this statement before: “I don’t trust AI results because it makes things up (hallucination).”

Medium · AI

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

An AI Model Just Found a 27-Year-Old Zero-Day in OpenBSD

Anthropic’s Claude Mythos (still unreleased, currently gated to about 50 partner orgs through Project Glasswing) autonomously discovered…

Medium · Data Science

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

Anthropic’s Claude Mythos Cybersecurity Circus

A critical review of Anthropic’s Claude Mythos cybersecurity capabilities and risks, written with the assistance of Gemini 3.1.

Medium · AI

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

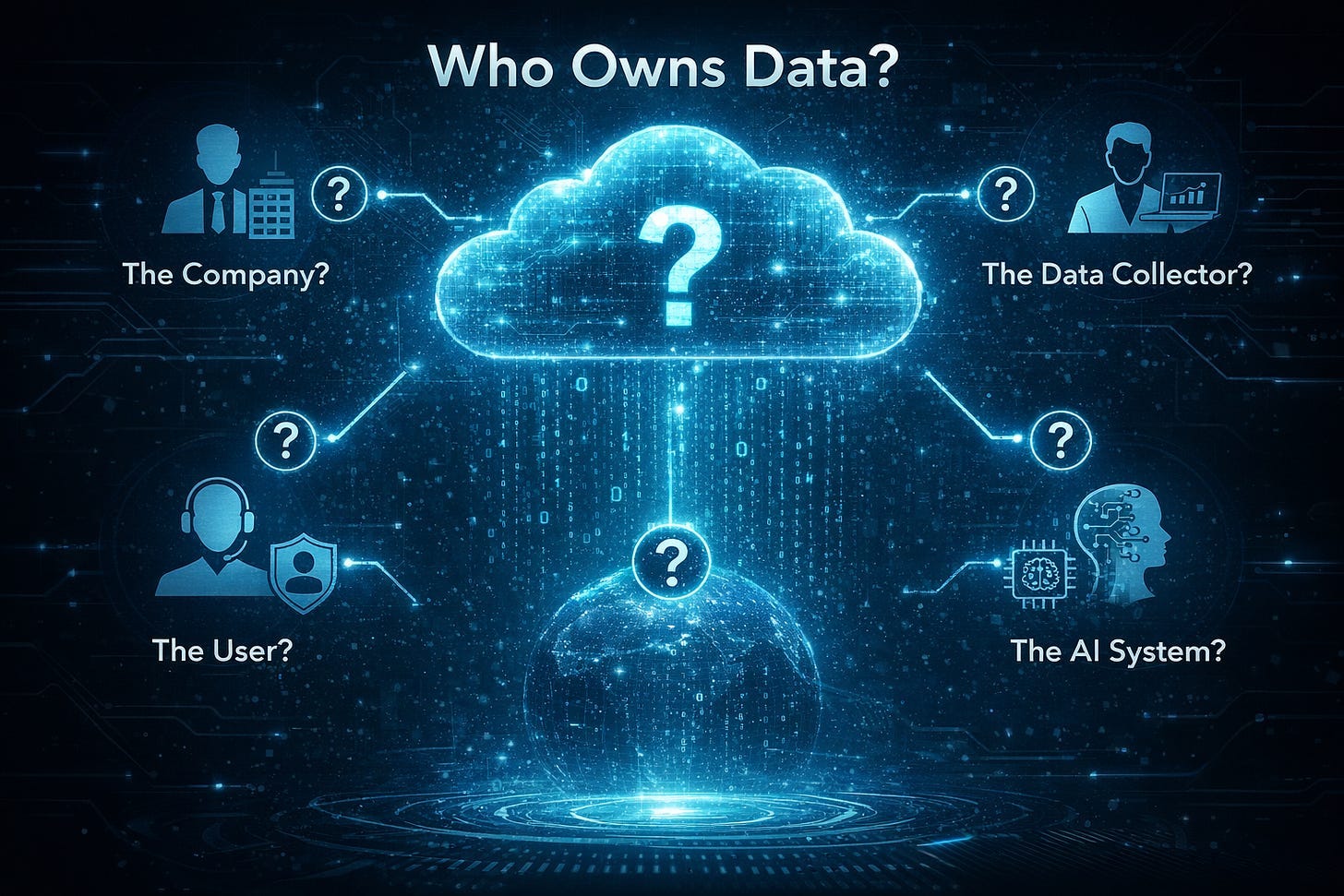

AI & Ownership: Who owns what?

In my earlier articles on understanding AI, I explored how these systems are changing the way we think, create, and work, and what happens…

Dev.to AI

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

Deepfake Fraud Tripled to $1.1B. Your Evidence Workflow Didn't.

A shift in the digital evidence landscape has arrived, and for developers in the computer vision and biometrics space, the implications are profound. The news…

Medium · AI

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

In Pursuit of a Perfect CIRCLE

What if evaluating AI is really about how humans interact with uncertainty?

Medium · AI

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

The End of Responsible-AI Theater and Nicole Junkermann's View on AI Governance

By Nicole Junkermann, originally published in Klamm.

Medium · Machine Learning

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

Who Teaches Your AI Right from Wrong? The Constitutional Problem of RLHF

*One evaluator’s values. Billions of conversations. No oversight.*

Dev.to AI

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

Big Tech firms are accelerating AI investments and integration, while regulators and companies focus on safety and responsible adoption.

The AI landscape is experiencing unprecedented growth and transformation. This post delves into the key developments shaping the future of artificial intelligence…

Medium · AI

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

The Question That Actually Matters When AI Scares You

The fear of being replaced is real. But the question that actually matters is different, and the answer changes everything.

Dev.to AI

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

When AI Recombines Partial Government Data: Why Structured Records Become Necessary

When fragmented public information is reassembled without context, its meaning, authority, and accuracy begin to drift.

Medium · Machine Learning

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

Anthropic’s Project Glasswing: Securing Critical Software in the AI Era

One of the world’s leading AI labs has deliberately withheld its most powerful model not to slow progress, but to give defenders a…

The Algorithmic Bridge

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

AI Will Be Met With Violence, and Nothing Good Will Come of It

It has started

Hackernoon

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

Is Mythos Really The Internet's Greatest Cybersecurity Risk? Or Just an Anthropic Product Launch?

Anthropic built Claude Mythos, a model that found thousands of zero-days in every major OS and browser, broke out of a sandbox unprompted, and showed signs of…

Dev.to AI

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

OpenAI Takes a Step to Protect Children from AI-Generated Exploitation

TechCrunch AI

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

OpenAI releases a new safety blueprint to address the rise in child sexual exploitation

OpenAI's new Child Safety Blueprint aims to tackle the alarming rise in child sexual exploitation linked to advancements in AI.

Dev.to AI

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

Newly Discovered Skills This Week — 2026-04-08

52,702 skills indexed, 2,105 audited. Found 172 malicious, 1,012 suspicious. Audit: clawsec.cc · Search: clawsearch.cc · Pre-install check: …

Dev.to AI

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

Skill Category Distribution — 2026-04-08

52,702 skills indexed, 2,105 audited. Found 172 malicious, 1,012 suspicious. Audit: clawsec.cc · Search: clawsearch.cc · Pre-install check: …

Dev.to AI

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

Rising Authors — Clean Track Records — 2026-04-08

52,702 skills indexed, 2,105 audited. Found 172 malicious, 1,012 suspicious. Audit: clawsec.cc · Search: clawsearch.cc · Pre-install check: …

Dev.to AI

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

Suspicious Skills — What to Watch — 2026-04-08

52,702 skills indexed, 2,105 audited. Found 172 malicious, 1,012 suspicious. Audit: clawsec.cc · Search: clawsearch.cc · Pre-install check: …

Dev.to AI

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

Safest Skills — Recommended Picks — 2026-04-08

52,702 skills indexed, 2,105 audited. Found 172 malicious, 1,012 suspicious. Audit: clawsec.cc · Search: clawsearch.cc · Pre-install check: …

Dev.to AI

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

Malicious Skills Exposed — Threat Breakdown — 2026-04-08

52,702 skills indexed, 2,105 audited. Found 172 malicious, 1,012 suspicious. Audit: clawsec.cc · Search: clawsearch.cc · Pre-install check: …

Hackernoon

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

I Spent 48 Hours Responding to the LiteLLM Supply Chain Attack. Here Is Everything I Know

LiteLLM versions 1.82.7 and 1.82.8 were backdoored with credential-stealing malware through a stolen PyPI token. Full technical breakdown, incident response…

Stratechery

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

Anthropic’s New Model, The Mythos Wolf, Glasswing and Alignment

Anthropic says its new model is too dangerous to release; there are reasons to be skeptical, but to the extent Anthropic is right, that raises even deeper…

OpenAI News

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

Introducing the Child Safety Blueprint

Discover OpenAI’s Child Safety Blueprint: a roadmap for building AI responsibly with safeguards, age-appropriate design, and collaboration to protect and empower…

ArXiv cs.AI

🛡️ AI Safety & Ethics

📄 Paper

⚡ AI Lesson

1mo ago

Reciprocal Trust and Distrust in Artificial Intelligence Systems: The Hard Problem of Regulation

arXiv:2604.05826v1 Announce Type: new Abstract: Policy makers, scientists, and the public are increasingly confronted with thorny questions about the regulation

ArXiv cs.AI

🛡️ AI Safety & Ethics

📄 Paper

⚡ AI Lesson

1mo ago

Synthetic Trust Attacks: Modeling How Generative AI Manipulates Human Decisions in Social Engineering Fraud

arXiv:2604.04951v1 Announce Type: cross Abstract: Imagine receiving a video call from your CFO, surrounded by colleagues, asking you to urgently authorise a…

ArXiv cs.AI

🛡️ AI Safety & Ethics

📄 Paper

⚡ AI Lesson

1mo ago

Robust AI Security and Alignment: A Sisyphean Endeavor?

arXiv:2512.10100v2 Announce Type: replace Abstract: This manuscript establishes information-theoretic limitations for robustness of AI security and alignment by

ArXiv cs.AI

🛡️ AI Safety & Ethics

📄 Paper

⚡ AI Lesson

1mo ago

Safety, Security, and Cognitive Risks in World Models

arXiv:2604.01346v2 Announce Type: replace-cross Abstract: World models (learned internal simulators of environment dynamics) are rapidly becoming foundational…

Forbes Innovation

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

Gene Regulation May Control How Long We Live

Cross-species research shows that RNA splicing patterns, not just gene activity, track maximum lifespan in mammals, revealing a new axis of longevity control.

Hackernoon

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

The Deepfake Paradox: Why Blockchain Holds the Key to Digital Trust

Deepfakes are rapidly destroying trust in digital content, making detection an unwinnable arms race. Instead of trying to identify fake media, blockchain offers

The Verge

🛡️ AI Safety & Ethics

⚡ AI Lesson

1mo ago

Gemini is making it faster for distressed users to reach mental health resources

Google says it has updated Gemini to better direct users to mental health resources during moments of crisis. The change comes as the tech giant faces a…

ArXiv cs.AI

🛡️ AI Safety & Ethics

📄 Paper

⚡ AI Lesson

1mo ago

Incompleteness of AI Safety Verification via Kolmogorov Complexity

arXiv:2604.04876v1 Announce Type: new Abstract: Ensuring that artificial intelligence (AI) systems satisfy formal safety and policy constraints is a central…

ArXiv cs.AI

🛡️ AI Safety & Ethics

📄 Paper

⚡ AI Lesson

1mo ago

Is your AI Model Accurate Enough? The Difficult Choices Behind Rigorous AI Development and the EU AI Act

arXiv:2604.03254v1 Announce Type: cross Abstract: Technical and legal debates frequently suggest that "accuracy" is an objective, measurable, and purely technical…

ArXiv cs.AI

🛡️ AI Safety & Ethics

📄 Paper

⚡ AI Lesson

1mo ago

SafeScreen: A Safety-First Screening Framework for Personalized Video Retrieval for Vulnerable Users

arXiv:2604.03264v1 Announce Type: cross Abstract: Open-domain video platforms offer rich, personalized content that could support health, caregiving, and…

ArXiv cs.AI

🛡️ AI Safety & Ethics

📄 Paper

⚡ AI Lesson

1mo ago

Safety-Aligned 3D Object Detection: Single-Vehicle, Cooperative, and End-to-End Perspectives

arXiv:2604.03325v1 Announce Type: cross Abstract: Perception plays a central role in connected and autonomous vehicles (CAVs), underpinning not only conventional…

ArXiv cs.AI

🛡️ AI Safety & Ethics

📄 Paper

⚡ AI Lesson

1mo ago

Toward a Sustainable Software Architecture Community: Evaluating ICSA's Environmental Impact

arXiv:2604.04096v1 Announce Type: cross Abstract: Generative AI (GenAI) tools are increasingly integrated into software architecture research, yet the…

ArXiv cs.AI

🛡️ AI Safety & Ethics

📄 Paper

⚡ AI Lesson

1mo ago

Cyber-Physical Systems Security: A Comprehensive Review of Anomaly Detection Techniques

arXiv:2502.13256v2 Announce Type: replace-cross Abstract: In an increasingly interconnected world, Cyber-Physical Systems (CPS) are essential to critical…

ArXiv cs.AI

🛡️ AI Safety & Ethics

📄 Paper

⚡ AI Lesson

1mo ago

SoSBench: Benchmarking Safety Alignment on Six Scientific Domains

arXiv:2505.21605v3 Announce Type: replace-cross Abstract: Large language models (LLMs) exhibit advancing capabilities in complex tasks, such as reasoning and…

ArXiv cs.AI

🛡️ AI Safety & Ethics

📄 Paper

⚡ AI Lesson

1mo ago

Vid-Freeze: Protecting Images from Malicious Image-to-Video Generation via Temporal Freezing

arXiv:2509.23279v2 Announce Type: replace-cross Abstract: The rapid progress of image-to-video (I2V) generation models has introduced significant risks by enabling…

DeepCamp AI
