Why RAG Still Fails Sometimes | And How to Fix It (Explained Visually)

Retrieval-Augmented Generation (RAG) helps AI models reduce hallucinations by retrieving relevant documents before answering.

But even well-built RAG systems can still fail.

In this video, I explain why RAG still fails sometimes and how to fix it, using simple visual diagrams with no math or heavy technical language.

You’ll learn why RAG failures are usually pipeline problems, not model problems, and how small design mistakes can lead to confident but incorrect answers.

In this video, you’ll learn:

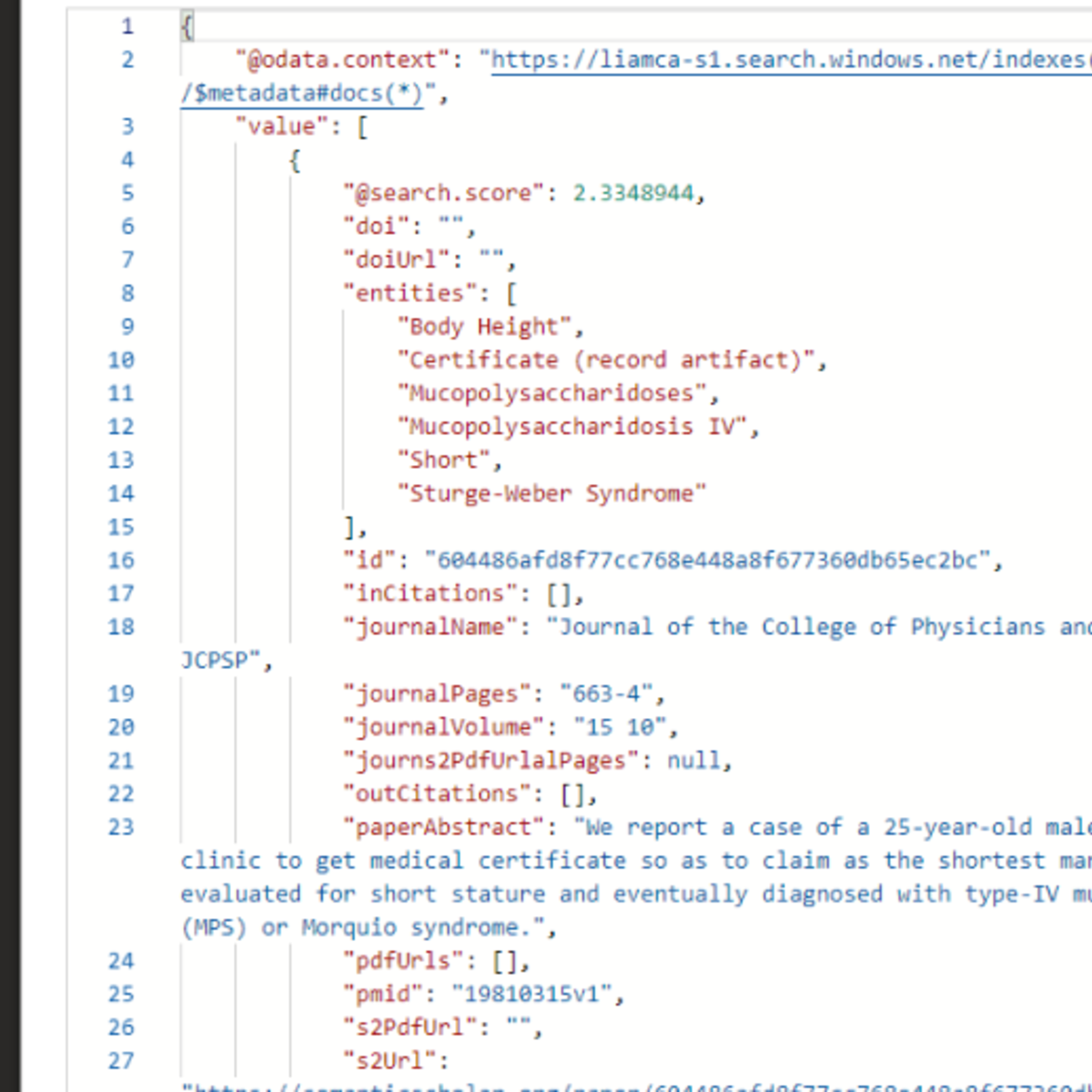

- Why bad retrieval leads to bad answers

- What happens when the right information is missing

- Why models sometimes ignore retrieved sources

- How weak citations break trust

- Practical ways to fix each failure mode
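For readers who want to see some of these failure modes in code, here is a minimal sketch, not the method shown in the video. It assumes a toy retriever that scores documents by word overlap (a stand-in for a real embedding search), an arbitrary `min_score` cutoff of 0.2, and a bracketed citation format like `[d1]`. All function names are hypothetical. It shows three guards: dropping weak retrieval matches, abstaining when nothing relevant is found, and checking that an answer cites only retrieved sources.

```python
import re

def tokenize(text):
    """Lowercase a string and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query, docs, min_score=0.2):
    """Rank docs by Jaccard word overlap with the query; drop weak matches.

    Assumption: word overlap stands in for a real similarity search,
    and 0.2 is an arbitrary illustrative threshold.
    """
    q = tokenize(query)
    scored = []
    for doc_id, text in docs.items():
        d = tokenize(text)
        union = q | d
        score = len(q & d) / len(union) if union else 0.0
        if score >= min_score:
            scored.append((score, doc_id))
    # Highest-scoring documents first.
    return [doc_id for score, doc_id in sorted(scored, reverse=True)]

def build_prompt(query, docs, hits):
    """Return None (abstain) when retrieval found nothing usable."""
    if not hits:
        return None
    sources = "\n".join(f"[{h}] {docs[h]}" for h in hits)
    return (
        "Answer using only these sources and cite them by id.\n"
        f"{sources}\n\nQuestion: {query}"
    )

def cited_ids_are_valid(answer, hits):
    """Check that the answer cites at least one source, and only retrieved ones."""
    cited = set(re.findall(r"\[([^\]]+)\]", answer))
    return bool(cited) and cited <= set(hits)
```

Returning `None` from `build_prompt` lets the calling code say "I don't know" instead of passing an empty context to the model, which is one way to avoid a confident but unsupported answer.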

This explanation is ideal for anyone building or using RAG systems for research, education, or enterprise AI tools.

#RAG #AIExplained #LLMs #MachineLearning #AITrust

Like the video if it helped

Subscribe for more simple, visual AI explanations


