Training, Evaluating, and Monitoring Machine Learning Models

Building machine learning models is only the first step. To create reliable ML systems, engineers must evaluate model performance, diagnose prediction errors, and monitor deployed models over time. In this course, you'll learn how to train, evaluate, and monitor machine learning models using practical engineering techniques.

You’ll begin by exploring model training strategies that improve convergence and performance. You’ll analyze training logs, loss curves, and class imbalance effects to understand how models learn and where they struggle.

Next, you’ll learn how to evaluate machine learning models using appropriate performance metrics. You’ll analyze confusion matrices and residual patterns to identify systematic prediction errors and assess the statistical significance of model improvements.

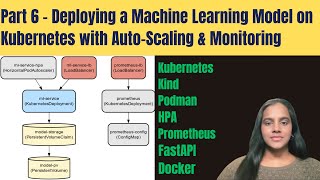

Finally, you’ll focus on monitoring machine learning models in production environments. You’ll apply validation techniques, analyze A/B testing results, and monitor model behavior over time to detect performance drift and trigger retraining workflows.

Through a hands-on project, you'll design a model evaluation and monitoring framework that helps ensure machine learning systems remain accurate and reliable after deployment.

Watch on Coursera ↗
