RAG vs Fine Tuning || War between 2 AI greats #RAG #finetuning #llm

In modern AI systems, two powerful approaches often get compared: RAG (Retrieval-Augmented Generation) and Fine-Tuning.

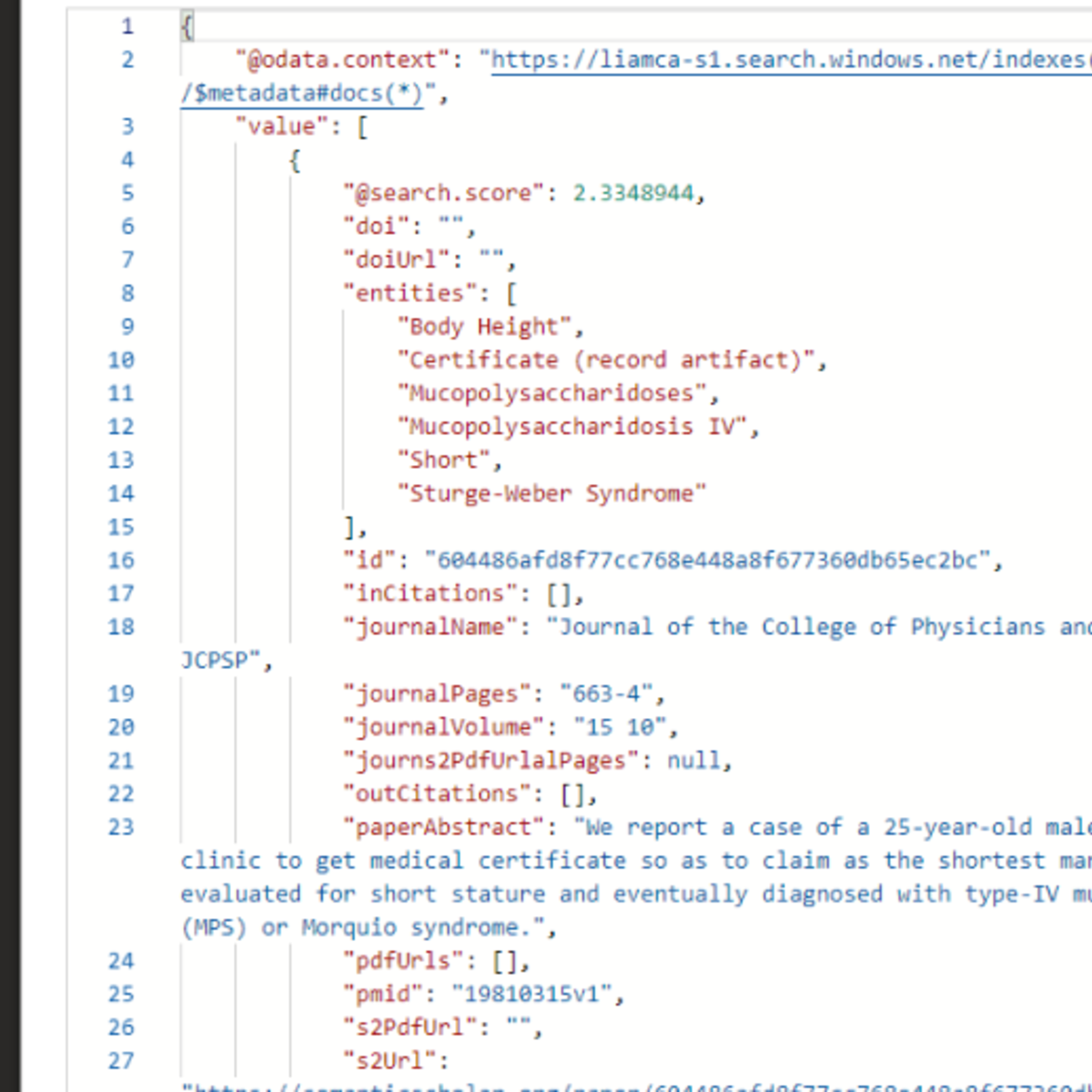

RAG improves responses by retrieving relevant information from external sources such as documents, databases, or vector stores at inference time, then passing it to the model as context. This lets the model draw on fresh, up-to-date knowledge without being retrained.
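To make the retrieval step concrete, here is a minimal sketch in Python. It assumes a tiny in-memory document list and a toy bag-of-words "embedding" in place of a real embedding model and vector store. The names `DOCUMENTS`, `embed`, `retrieve`, and `build_prompt` are hypothetical, chosen only for illustration.

```python
import math
from collections import Counter

# Hypothetical in-memory "document store"; a real system would query a vector database.
DOCUMENTS = [
    "The refund window is 30 days from the date of purchase.",
    "Support is available Monday to Friday, 9am to 5pm.",
    "Premium plans include priority support and extra storage.",
]

def embed(text):
    """Toy embedding: lowercase word counts (a stand-in for a learned embedding model)."""
    for ch in "?.,":
        text = text.replace(ch, "")
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[term] * b[term] for term in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query, k=1):
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(DOCUMENTS, key=lambda doc: cosine(q, embed(doc)), reverse=True)
    return ranked[:k]

def build_prompt(query, k=1):
    """Place the retrieved text in the prompt; the model's weights are never touched."""
    context = "\n".join(retrieve(query, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"

print(build_prompt("How long is the refund window?"))
```

Updating the knowledge here only means editing `DOCUMENTS`; nothing about the model changes.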

Fine-Tuning, on the other hand, modifies the model's internal weights using additional training data. This bakes new behaviors, domain expertise, or task-specific skills into the model itself.
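The idea of "modifying weights with additional data" can be shown at toy scale. The sketch below assumes a two-parameter linear model standing in for an LLM and plain gradient descent standing in for a real training loop. The names `pretrained`, `domain_data`, and `fine_tune` are hypothetical.

```python
def predict(weights, x):
    """A two-parameter 'model': y = w * x + b."""
    w, b = weights
    return w * x + b

def mse(weights, data):
    """Mean squared error of the model on (x, y) pairs."""
    return sum((predict(weights, x) - y) ** 2 for x, y in data) / len(data)

def fine_tune(weights, data, lr=0.05, epochs=500):
    """Continue training from existing weights using gradient descent on new data."""
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return (w, b)

# Hypothetical starting point: weights "learned" earlier on general data.
pretrained = (1.0, 0.0)

# Hypothetical domain data that follows y = 2x + 1.
domain_data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0)]

tuned = fine_tune(pretrained, domain_data)
print("loss before:", mse(pretrained, domain_data))
print("loss after: ", mse(tuned, domain_data))
```

Unlike retrieval, the new knowledge now lives in the parameters, so changing it again means training again.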

Instead of choosing one over the other, many modern AI systems combine both approaches:

• Fine-Tuning → improves capability and specialization by changing the model's weights

• RAG → supplies current, source-grounded context at query time

Together, they form a hybrid architecture, which is widely used in real-world LLM applications like enterprise assistants, knowledge bots, and domain-specific AI systems.
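A hybrid setup can be sketched as a small pipeline: retrieve first, then hand the retrieved text to a specialized model. The example below is only an outline under stated assumptions. `stub_retrieve` and `stub_fine_tuned_model` are hypothetical stand-ins for a real retriever and a real fine-tuned LLM, and `hybrid_answer` is an illustrative name, not an API from any library.

```python
from typing import Callable, List

def hybrid_answer(
    query: str,
    retrieve: Callable[[str], List[str]],
    generate: Callable[[str], str],
) -> str:
    """Retrieve context (the RAG part), then generate with a specialized model."""
    passages = retrieve(query)
    context = "\n".join(f"- {p}" for p in passages)
    prompt = f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"
    return generate(prompt)

def stub_retrieve(query: str) -> List[str]:
    """Hypothetical retriever; a real one would search an index of documents."""
    return ["The refund window is 30 days from the date of purchase."]

def stub_fine_tuned_model(prompt: str) -> str:
    """Hypothetical fine-tuned model; here it just restates the first context line."""
    first = next(line for line in prompt.splitlines() if line.startswith("- "))
    return "Per company policy: " + first[2:]

print(hybrid_answer("How long is the refund window?", stub_retrieve, stub_fine_tuned_model))
```

Keeping the retriever and the model behind separate functions means either side can be swapped or updated without touching the other.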

Sometimes the best solution isn’t competition — it’s collaboration.

#AI #MachineLearning #RAG #FineTuning #LLM #ArtificialIntelligence #AIArchitecture
