Production-Ready Multimodal ML Engineering

Production machine learning systems don't run on model accuracy alone — they depend on reliable data pipelines, optimized inference, and scalable cloud infrastructure. This course integrates the full stack of ML engineering skills needed to build and operate multimodal AI systems in the real world.

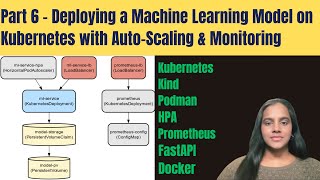

You will design a unified feature store schema for image, audio, and text data, then automate ingestion and validation using Apache Airflow and Great Expectations. You will apply test-driven development to PyTorch data loaders and training loops, optimize a model for real-time inference using TensorRT, and manage your codebase with GitFlow and CI/CD pipelines. Finally, you will containerize and deploy a GPU-accelerated service to Kubernetes, tuning autoscaling to meet production performance targets.
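As a rough illustration of what a unified feature store schema with validation could look like, here is a minimal sketch in plain Python. The record fields, the `MODALITIES` set, the `validate` helper, and the embedding dimension are all hypothetical choices made for this example. They are not taken from the course materials, and a real pipeline would more likely express these checks in Great Expectations and schedule them with Airflow.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List

# Assumption: the three modalities named in the course description.
MODALITIES = {"image", "audio", "text"}


@dataclass
class FeatureRecord:
    """One row in an illustrative (hypothetical) unified feature store."""
    entity_id: str   # key shared across modalities
    modality: str    # "image", "audio", or "text"
    uri: str         # location of the raw asset
    embedding: List[float] = field(default_factory=list)
    metadata: Dict[str, Any] = field(default_factory=dict)


def validate(record: FeatureRecord, embedding_dim: int = 4) -> List[str]:
    """Return a list of validation errors; an empty list means the record passed."""
    errors = []
    if not record.entity_id:
        errors.append("entity_id is empty")
    if record.modality not in MODALITIES:
        errors.append(f"unknown modality: {record.modality!r}")
    if len(record.embedding) != embedding_dim:
        errors.append(
            f"embedding has {len(record.embedding)} values, expected {embedding_dim}"
        )
    return errors


good = FeatureRecord("u1", "image", "s3://bucket/a.png", [0.1, 0.2, 0.3, 0.4])
bad = FeatureRecord("", "video", "s3://bucket/b.mp4", [0.1])
print(validate(good))
print(validate(bad))
```

Keeping every modality in one record type with a shared `entity_id` is one way to make cross-modal joins straightforward. Per-modality tables are an equally reasonable design.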

By the end, you will have a portfolio-ready project that demonstrates end-to-end ML infrastructure skills relevant to ML Infrastructure Engineer, MLOps Engineer, and senior ML practitioner roles.

Watch on Coursera ↗
