Optimize and Manage Your ML Codebase

Skills: ML Pipelines (90%)

Are you deploying ML models that need to respond in milliseconds, not seconds? In production environments, even the most accurate model becomes worthless if it can't meet real-time performance demands.

This short course helps ML and AI professionals systematically optimize inference code and establish robust development workflows for production-ready ML systems.

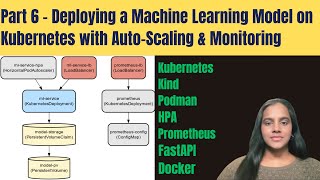

By completing this course, you'll be able to diagnose performance bottlenecks in your inference pipelines, apply advanced optimization techniques like quantization and pruning, and implement GitFlow or Trunk-Based Development strategies with automated CI/CD pipelines that you can deploy immediately in your workplace.
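To give a rough sense of what quantization and pruning involve, here is a minimal sketch in plain Python. It is not taken from the course: the function names `quantize_int8`, `dequantize`, and `prune_by_magnitude` are hypothetical, and the sketch assumes a single symmetric scale for all weights. Frameworks such as PyTorch, TensorFlow, and TensorRT provide their own, more sophisticated implementations; this only illustrates the underlying arithmetic.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one symmetric scale (assumption)."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the integer codes."""
    return [v * scale for v in quantized]


def prune_by_magnitude(weights, threshold):
    """Zero out weights whose absolute value is below the threshold."""
    return [w if abs(w) >= threshold else 0.0 for w in weights]


# Illustrative values only, not real model weights.
weights = [0.5, -1.27, 0.03, 1.0]

quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

pruned = prune_by_magnitude(weights, threshold=0.1)

print("int8 codes:", quantized)
print("max round-trip error:", max_error)
print("pruned:", pruned)
```

The round-trip error is bounded by half the scale, which is why quantization trades a small loss in precision for smaller, faster models. Pruning instead removes low-magnitude weights outright, so its effect on accuracy typically needs to be checked on a validation set.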

By the end of this course, you will be able to:

- Analyze inference code to optimize for real-time performance

- Evaluate Git branching strategies and CI/CD pipelines for codebase management
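Analyzing inference code for real-time performance usually starts with measuring latency. The sketch below is one possible way to do that with only the Python standard library. The helper `measure_latency_ms` and the stand-in model `fake_predict` are hypothetical names introduced for illustration, and the warmup and run counts are arbitrary assumptions.

```python
import statistics
import time


def measure_latency_ms(predict, sample, warmup=5, runs=50):
    """Time repeated calls to `predict` and report latency percentiles in ms."""
    # Warmup calls are excluded so one-time setup costs do not skew the results.
    for _ in range(warmup):
        predict(sample)

    timings = []
    for _ in range(runs):
        start = time.perf_counter()
        predict(sample)
        timings.append((time.perf_counter() - start) * 1000.0)

    timings.sort()
    p95_index = min(len(timings) - 1, int(0.95 * len(timings)))
    return {
        "p50_ms": statistics.median(timings),
        "p95_ms": timings[p95_index],
        "max_ms": timings[-1],
    }


def fake_predict(features):
    """Hypothetical stand-in for a model's inference call."""
    return sum(v * v for v in features)


stats = measure_latency_ms(fake_predict, list(range(1000)))
print(stats)
```

Reporting tail latency (p95, max) alongside the median matters because real-time budgets are typically broken by the slowest requests, not the average ones. When timing GPU inference, the device generally needs to be synchronized before the timer is stopped, otherwise asynchronous execution can make calls look faster than they are.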

This course is unique because it bridges the gap between ML model development and production engineering, combining performance optimization techniques with software engineering best practices specifically tailored for ML workflows.

To be successful in this course, you should have experience with Python, PyTorch or TensorFlow, TensorRT, and Git version control, along with a basic understanding of ML model deployment.

Watch on Coursera ↗
