OCR vs. Image Embeddings for PDF RAG: Which One is Better?

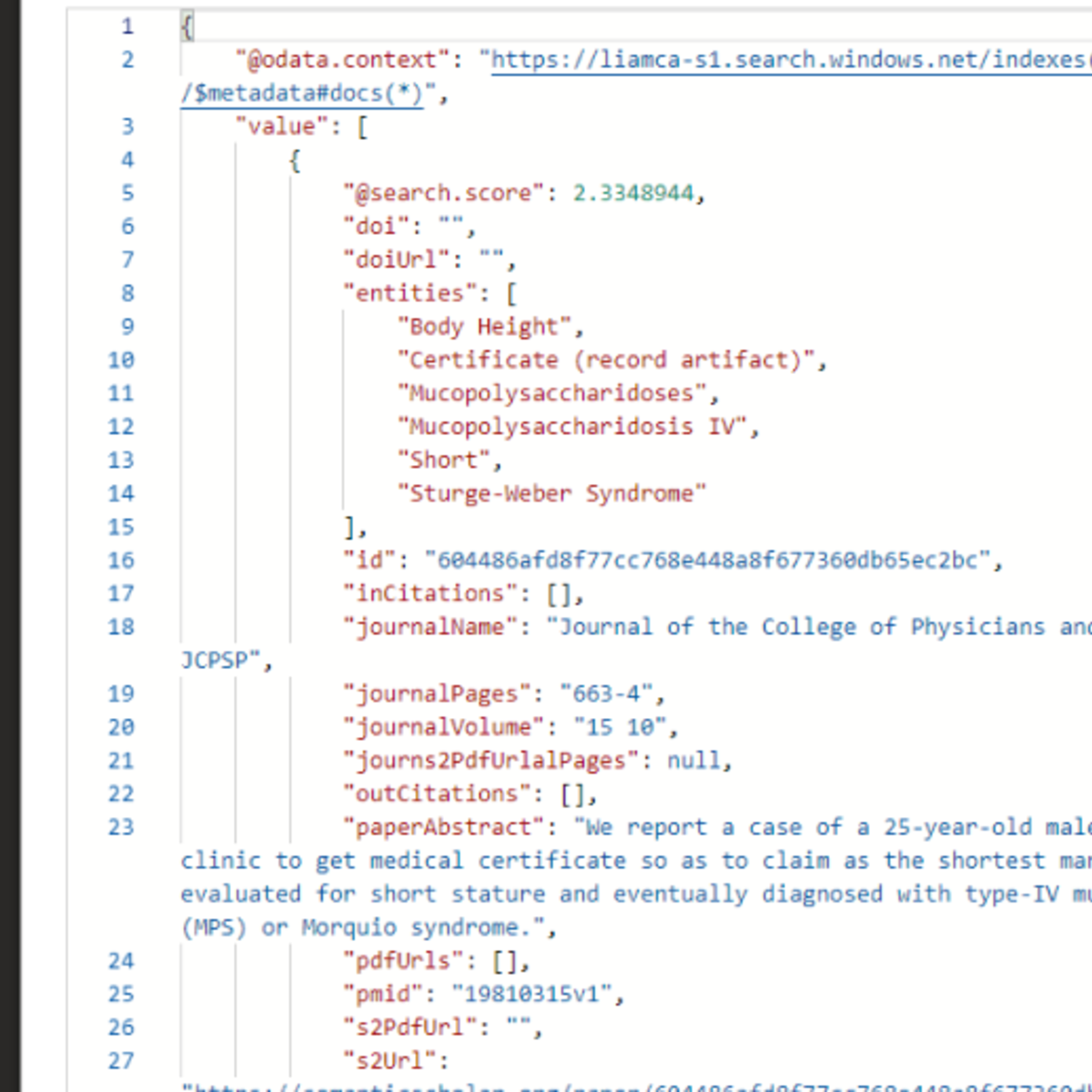

My colleagues at Weaviate released IRPAPERS, a benchmark comparing image-based and text-based retrieval over 3,230 pages from 166 scientific papers.

The setup: Take the same PDFs and process them two ways. For text, run OCR with GPT-4.1, then retrieve with hybrid search that combines Arctic 2.0 embeddings and BM25. For images, embed the raw page images with ColModernVBERT multi-vector embeddings. Test both on 180 needle-in-the-haystack questions.
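Multi-vector models of this kind typically score a query against a page with late interaction (MaxSim): each query vector is matched to its most similar page vector, and the maxima are summed. A minimal sketch, assuming that scoring rule; the function names and the toy vectors are illustrative and not taken from the benchmark:

```python
from typing import List

Vector = List[float]

def dot(a: Vector, b: Vector) -> float:
    """Plain dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

def maxsim_score(query_vecs: List[Vector], page_vecs: List[Vector]) -> float:
    """Late-interaction score: best page match per query vector, summed."""
    return sum(max(dot(q, p) for p in page_vecs) for q in query_vecs)

# Hypothetical 2-dimensional embeddings (real models use many more dimensions
# and many more vectors per page).
query = [[1.0, 0.0], [0.0, 1.0]]
page_a = [[0.9, 0.1], [0.2, 0.8]]
page_b = [[0.5, 0.5], [0.4, 0.4]]

scores = {
    "page_a": maxsim_score(query, page_a),
    "page_b": maxsim_score(query, page_b),
}
best_page = max(scores, key=scores.get)
print(best_page, scores)
```

In this toy case `page_a` wins because each query vector finds a close match somewhere on the page, which is the intuition behind keeping many vectors per page instead of one.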

The results:

Text edges out images at the top rank: 46% vs 43% Recall@1

But images match or exceed text at deeper recall: 93% vs 91% Recall@20
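For reference, Recall@k in a needle-in-the-haystack setting can be computed as the share of questions whose target page shows up in the top k results. A small sketch, assuming one gold page per question; the query IDs, page IDs, and rankings are made up for illustration:

```python
from typing import Dict, List

def recall_at_k(rankings: Dict[str, List[str]], gold: Dict[str, str], k: int) -> float:
    """Fraction of queries whose gold page appears among the top-k ranked pages."""
    hits = sum(1 for qid, ranked in rankings.items() if gold[qid] in ranked[:k])
    return hits / len(rankings)

# Hypothetical retrieval output: query ID -> ranked page IDs (best first).
rankings = {
    "q1": ["p3", "p1", "p7"],
    "q2": ["p2", "p9", "p4"],
    "q3": ["p5", "p6", "p8"],
    "q4": ["p1", "p4", "p2"],
}
# Hypothetical ground truth: query ID -> the single page holding the answer.
gold = {"q1": "p3", "q2": "p4", "q3": "p0", "q4": "p4"}

r1 = recall_at_k(rankings, gold, 1)
r3 = recall_at_k(rankings, gold, 3)
print(r1, r3)
```

Recall can only stay flat or rise as k grows, which is why a method that trails at Recall@1 can still lead at Recall@20.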

More importantly, text-based and image-based methods fail on different queries.

At Recall@1:

• 22 queries succeed with text but fail with images

• 18 queries succeed with images but fail with text
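Taken together, the two counts above mean the modalities disagree on 22 + 18 = 40 of the 180 questions at rank 1. Counts like these are set differences over per-query hits. A sketch with hypothetical query IDs (not the benchmark's data):

```python
# Hypothetical sets of query IDs answered correctly at rank 1 by each modality.
text_hits = {"q1", "q2", "q3", "q5"}
image_hits = {"q2", "q3", "q4", "q6", "q7"}

text_only = text_hits - image_hits    # succeed with text, fail with images
image_only = image_hits - text_hits   # succeed with images, fail with text
both = text_hits & image_hits         # succeed with both
either = text_hits | image_hits       # succeed with at least one

print(sorted(text_only), sorted(image_only), len(both), len(either))
```

An oracle that always picked the right modality per query would succeed on every query in `either`, so the size of that union shows how much headroom there is for combining the two.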

This complementarity is what makes Multimodal Hybrid Search work. By fusing scores from both text and image retrieval, the authors achieved:

• 49% Recall@1 (beating either modality alone)

• 81% Recall@5

• 95% Recall@20
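The post does not spell out how the scores are fused, so the following is only a sketch of one common approach: min-max normalize each retriever's scores, then take a weighted sum. The function names, the `alpha` weight, and all scores are assumptions made for illustration.

```python
from typing import Dict

def min_max(scores: Dict[str, float]) -> Dict[str, float]:
    """Rescale scores to [0, 1] so the two retrievers become comparable."""
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {doc: 1.0 for doc in scores}
    return {doc: (s - lo) / (hi - lo) for doc, s in scores.items()}

def fuse(text_scores: Dict[str, float],
         image_scores: Dict[str, float],
         alpha: float = 0.5) -> Dict[str, float]:
    """Weighted sum of normalized scores; a page missing from one list gets 0 there."""
    t, i = min_max(text_scores), min_max(image_scores)
    docs = set(t) | set(i)
    return {d: alpha * t.get(d, 0.0) + (1 - alpha) * i.get(d, 0.0) for d in docs}

# Hypothetical raw scores on different scales (e.g. hybrid text vs. MaxSim).
text_scores = {"p1": 12.0, "p2": 11.0, "p3": 2.0}
image_scores = {"p2": 0.90, "p3": 0.85, "p4": 0.10}

fused = fuse(text_scores, image_scores)
ranking = sorted(fused, key=fused.get, reverse=True)
print(ranking)
```

Here text alone would rank `p1` first, while the fused ranking promotes `p2`, which both retrievers score highly. That is the kind of agreement signal a fused ranking can exploit.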

00:00 - Intro

00:08 - Visual- vs Text-based methods

01:04 - The IRPAPERS dataset

01:59 - The 6 different search strategies

03:43 - The results

04:30 - The paper's most interesting finding...

05:11 - Conclusion
