Natural Language Processing with Attention Models

In Course 4 of the Natural Language Processing Specialization, you will:

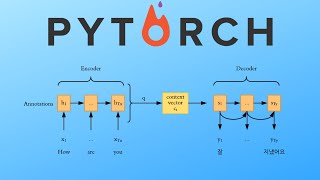

a) Translate complete English sentences into Portuguese using an encoder-decoder attention model,

b) Build a Transformer model to summarize text,

c) Use T5 and BERT models to perform question-answering.

By the end of this Specialization, you will have designed NLP applications that perform question-answering and sentiment analysis, and created tools to translate languages and summarize text!

Learners should have a working knowledge of machine learning; intermediate Python skills, including experience with a deep learning framework (e.g., TensorFlow, Keras); and proficiency in calculus, linear algebra, and statistics. Please make sure that you have completed Course 3, Natural Language Processing with Sequence Models, before starting this course.

This Specialization is designed and taught by two experts in NLP, machine learning, and deep learning. Younes Bensouda Mourri is an Instructor of AI at Stanford University who also helped build the Deep Learning Specialization. Łukasz Kaiser is a Staff Research Scientist at Google Brain and the co-author of TensorFlow, the Tensor2Tensor and Trax libraries, and the Transformer paper.
