Deploying and Debugging ML Microservices

Putting a machine learning model into production takes more than training it: the model also needs reliable deployment, monitoring, and debugging practices. In this course, you’ll learn how to deploy machine learning models as scalable services and maintain them within real software architectures.

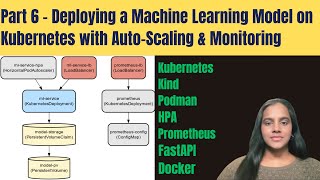

You’ll begin by learning how to package and deploy machine learning models using containerization and orchestration technologies. You’ll apply tools such as Docker and Kubernetes to manage application deployment and ensure that models run consistently across environments.

Next, you’ll design machine learning services that integrate into distributed system architectures. You’ll explore microservice design patterns, implement REST-based inference services, and analyze communication patterns that support scalable system behavior.
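To make "REST-based inference service" concrete, here is a minimal sketch using only the Python standard library. It is not taken from the course: the `/predict` path, the JSON shape `{"features": [...]}`, the `make_server` helper, and the fixed weights standing in for a trained model are all assumptions chosen for illustration.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def predict(features):
    """Stand-in for a trained model: a linear score with hypothetical weights."""
    weights = [0.5, -0.25, 1.0]
    return sum(w * x for w, x in zip(weights, features))


class InferenceHandler(BaseHTTPRequestHandler):
    """Handles POST /predict with a JSON body and returns a JSON prediction."""

    def do_POST(self):
        if self.path != "/predict":  # hypothetical endpoint name
            self.send_error(404, "unknown endpoint")
            return
        length = int(self.headers.get("Content-Length", 0))
        try:
            payload = json.loads(self.rfile.read(length))
            features = [float(x) for x in payload["features"]]
        except (ValueError, KeyError, TypeError):
            # Malformed JSON, missing key, or non-numeric values
            self.send_error(400, "expected a JSON object with a 'features' list")
            return
        body = json.dumps({"prediction": predict(features)}).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # silence the default per-request stderr logging


def make_server(port=0):
    """Bind to localhost; port 0 asks the OS for any free port."""
    return HTTPServer(("127.0.0.1", port), InferenceHandler)
```

Calling `make_server(8000).serve_forever()` would start the service locally. A production service would more likely use a web framework and load a real model artifact; the sketch only shows the request and response contract.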

You’ll also learn how to monitor deployed ML systems using logs, metrics, and tracing tools that reveal performance issues and system bottlenecks.
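As a rough illustration of the logs-and-metrics idea, the sketch below wraps a prediction function so that every call is counted, timed, and logged. It assumes nothing about the course's actual tooling: the `monitored` decorator, the in-process `METRICS` dictionary, and the toy `score` function are hypothetical names, and a real deployment would typically export such counters to a dedicated metrics system rather than keep them in memory.

```python
import functools
import logging
import time
from collections import defaultdict

logging.basicConfig(level=logging.INFO, format="%(asctime)s %(levelname)s %(message)s")
logger = logging.getLogger("inference")

# In-process counters (hypothetical names); values start at 0.0
METRICS = defaultdict(float)


def monitored(fn):
    """Count calls and errors, accumulate latency, and log each call."""

    @functools.wraps(fn)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        METRICS["requests_total"] += 1
        try:
            return fn(*args, **kwargs)
        except Exception:
            METRICS["errors_total"] += 1
            logger.exception("%s failed", fn.__name__)
            raise
        finally:
            elapsed = time.perf_counter() - start
            METRICS["latency_seconds_sum"] += elapsed
            logger.info("%s took %.6f s", fn.__name__, elapsed)

    return wrapper


@monitored
def score(features):
    """Toy stand-in for model inference: the mean of the inputs."""
    if not features:
        raise ValueError("features must not be empty")
    return sum(features) / len(features)
```

Dividing `METRICS["latency_seconds_sum"]` by `METRICS["requests_total"]` gives a mean latency, and a rising `errors_total` is an early sign that the service needs attention.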

Finally, you’ll apply debugging and testing techniques to diagnose and resolve problems in machine learning code and infrastructure. Through a hands-on project, you’ll deploy and troubleshoot a machine learning microservice, ensuring it performs reliably under real-world conditions.

Watch on Coursera ↗
