Information Theory

Apply entropy, KL divergence, and mutual information to ML problems.

After this skill you can…

- Calculate Shannon entropy and cross-entropy loss

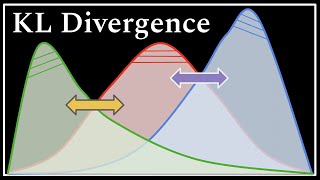

- Explain KL divergence intuitively

- Use mutual information for feature selection
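As a rough illustration of the three outcomes above, here is a minimal sketch in plain Python. The function names (`entropy`, `cross_entropy`, `kl_divergence`, `mutual_information`) are hypothetical and not taken from any course material. The sketch assumes discrete distributions given as lists of probabilities and measures everything in bits (log base 2); ML libraries typically compute cross-entropy loss with the natural logarithm instead.

```python
import math


def entropy(p):
    """Shannon entropy H(p) in bits; zero-probability outcomes contribute 0."""
    return -sum(pi * math.log2(pi) for pi in p if pi > 0)


def cross_entropy(p, q):
    """Cross-entropy H(p, q) in bits.

    Assumes q[i] > 0 wherever p[i] > 0; otherwise the value is infinite
    and math.log2 raises ValueError.
    """
    return -sum(pi * math.log2(qi) for pi, qi in zip(p, q) if pi > 0)


def kl_divergence(p, q):
    """KL(p || q) = H(p, q) - H(p): extra bits paid for coding p with q."""
    return cross_entropy(p, q) - entropy(p)


def mutual_information(joint):
    """I(X; Y) in bits from a joint probability table joint[x][y]."""
    px = [sum(row) for row in joint]          # marginal of X (row sums)
    py = [sum(col) for col in zip(*joint)]    # marginal of Y (column sums)
    return sum(
        pxy * math.log2(pxy / (px[i] * py[j]))
        for i, row in enumerate(joint)
        for j, pxy in enumerate(row)
        if pxy > 0
    )


if __name__ == "__main__":
    p = [0.5, 0.5]      # true distribution (fair coin)
    q = [0.25, 0.75]    # model's predicted distribution
    print("H(p)      =", entropy(p))
    print("H(p, q)   =", cross_entropy(p, q))
    print("KL(p||q)  =", kl_divergence(p, q))

    # Joint table for a feature X and label Y that always agree.
    dependent = [[0.5, 0.0], [0.0, 0.5]]
    print("I(X; Y)   =", mutual_information(dependent))
```

The identity used in `kl_divergence` is one way to read KL divergence intuitively: it is the gap between the cross-entropy of a model and the entropy of the data. For feature selection, one plausible approach is to estimate a joint table for each candidate feature against the label and rank features by `mutual_information`; a feature independent of the label scores 0.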

Prerequisites

Watch (8 videos)

Uses of Information Theory - Computerphile

→ Analyze data using information theory concepts → Design efficient data compression algorithms

Information Theory

→ Apply information theory principles to network coding → Analyze information theory concepts

Stanford EE274: Data Compression I 2023 I Lecture 6 - Arithmetic Coding

→ General knowledge

What is NOT Random?

→ Apply information theory to real-world problems → Analyze entropy in different systems

Lecture 16: Data Compression and Shannon’s Noiseless Coding Theorem

→ Apply Shannon's Noiseless Coding Theorem → Understand data compression principles

Lecture 13: The Gibbs Paradox; Shannon Information Entropy; Single Quantum Particle in a Box

→ Apply Shannon Information Entropy → Understand the Gibbs Paradox

DeepCamp AI
